Create natural conversations with Amazon Lex QnAIntent and Knowledge Bases for Amazon Bedrock

Customer service organizations today face an immense opportunity. As customer expectations grow, brands have a chance to creatively apply new innovations to transform the customer experience. Although meeting rising customer demands poses challenges, the latest breakthroughs in conversational artificial intelligence (AI) empower companies to meet these expectations.

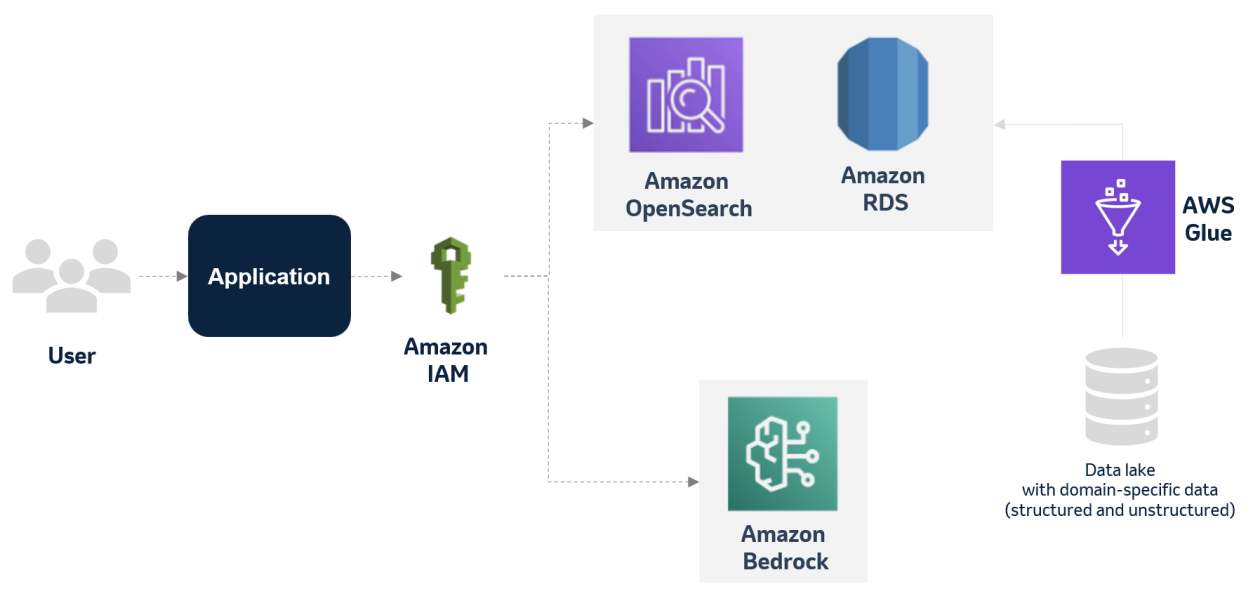

Customers today expect timely responses to their questions that are helpful, accurate, and tailored to their needs. The new QnAIntent, powered by Amazon Bedrock, can meet these expectations by understanding questions posed in natural language and responding conversationally in real time using your own authorized knowledge sources. Our Retrieval Augmented Generation (RAG) approach allows Amazon Lex to harness both the breadth of knowledge available in repositories as well as the fluency of large language models (LLMs).

Amazon Bedrock is a fully managed service that offers a choice of high-performing foundation models (FMs) from leading AI companies like AI21 Labs, Anthropic, Cohere, Meta, Mistral AI, Stability AI, and Amazon through a single API, along with a broad set of capabilities to build generative AI applications with security, privacy, and responsible AI.

In this post, we show you how to add generative AI question answering capabilities to your bots. This can be done using your own curated knowledge sources, and without writing a single line of code.

Read on to discover how QnAIntent can transform your customer experience.

Solution overview

Implementing the solution consists of the following high-level steps:

- Create an Amazon Lex bot.

- Create an Amazon Simple Storage Service (Amazon S3) bucket and upload a PDF file that contains the information used to answer questions.

- Create a knowledge base that will split your data into chunks and generate embeddings using the Amazon Titan Embeddings model. As part of this process, Knowledge Bases for Amazon Bedrock automatically creates an Amazon OpenSearch Serverless vector search collection to hold your vectorized data.

- Add a QnAIntent that uses the knowledge base to find answers to customers' questions, and then uses the Anthropic Claude model to generate answers to both initial and follow-up questions.

Prerequisites

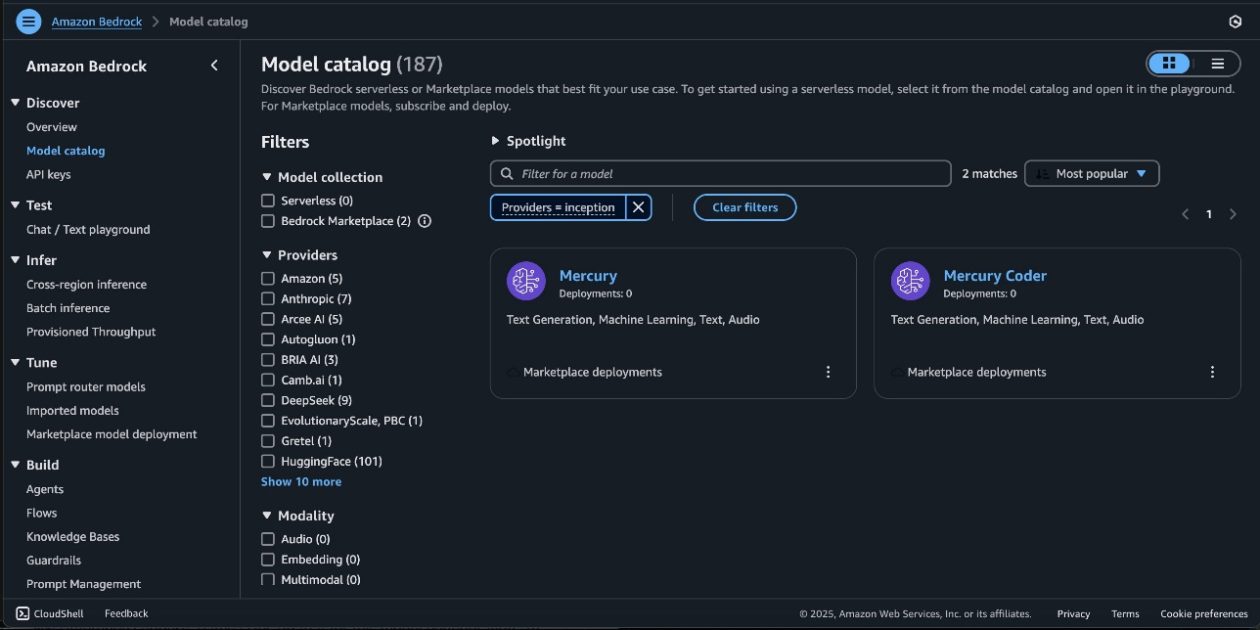

To follow along with the features described in this post, you need access to an AWS account with permissions to access Amazon Lex, Amazon Bedrock (with access to Anthropic Claude models and Amazon Titan embeddings or Cohere Embed), Knowledge Bases for Amazon Bedrock, and the OpenSearch Serverless vector engine. To request access to models in Amazon Bedrock, complete the following steps:

- On the Amazon Bedrock console, choose Model access in the navigation pane.

- Choose Manage model access.

- Select the Amazon and Anthropic models. (You can also choose to use Cohere models for embeddings.)

- Choose Request model access.
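The console is all you need for this step. If you also want a quick scripted check, the following minimal boto3 sketch lists the foundation models available in a Region and filters for the model families this post uses. It assumes AWS credentials and a Region are already configured. The ID prefixes are our own assumption about how these model IDs are named. A model appearing in this list does not prove that access was granted, so the Model access page remains the authoritative view.

```python
"""List Bedrock foundation models relevant to this walkthrough (sketch)."""

# Assumed model ID prefixes for Claude 3 and the Titan/Cohere embedding models.
WANTED_PREFIXES = ("anthropic.claude-3", "amazon.titan-embed", "cohere.embed")


def matching_model_ids(model_summaries, prefixes=WANTED_PREFIXES):
    """Return the sorted model IDs that start with one of the given prefixes."""
    return sorted(
        summary["modelId"]
        for summary in model_summaries
        if summary["modelId"].startswith(prefixes)  # str.startswith accepts a tuple
    )


def list_relevant_models(region_name="us-east-1"):
    # Imported here so the pure helper above can be used without boto3 installed.
    import boto3

    bedrock = boto3.client("bedrock", region_name=region_name)
    response = bedrock.list_foundation_models()
    return matching_model_ids(response["modelSummaries"])


if __name__ == "__main__":
    for model_id in list_relevant_models():
        print(model_id)
```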

Create an Amazon Lex bot

If you already have a bot you want to use, you can skip this step.

- On the Amazon Lex console, choose Bots in the navigation pane.

- Choose Create bot.

- Select Start with an example and choose the BookTrip example bot.

- For Bot name, enter a name for the bot (for example, BookHotel).

- For Runtime role, select Create a role with basic Amazon Lex permissions.

- In the Children’s Online Privacy Protection Act (COPPA) section, you can select No because this bot is not targeted at children under the age of 13.

- Keep the Idle session timeout setting at 5 minutes.

- Choose Next.

- When you use the QnAIntent to answer questions in a bot, you may want to increase the intent classification confidence threshold so that questions are not mistakenly matched to one of your other intents. We set this to 0.8 for now. You may need to adjust it up or down based on your own testing.

- Choose Done.

- Choose Save intent.
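The same settings can be sketched with the Lex V2 model-building API. This is a minimal sketch that makes a few assumptions. Unlike the console flow, it creates an empty bot rather than the BookTrip example. `ROLE_ARN` is a hypothetical placeholder for an existing Lex runtime role. The 0.8 threshold is applied when the English (`en_US`) locale is created.

```python
"""Create a Lex V2 bot and an en_US locale with a 0.8 confidence threshold (sketch)."""

# Hypothetical placeholder: replace with the ARN of your Lex runtime role.
ROLE_ARN = "arn:aws:iam::123456789012:role/HypotheticalLexRuntimeRole"


def bot_request(bot_name, role_arn):
    """Keyword arguments for lexv2-models create_bot."""
    return {
        "botName": bot_name,
        "roleArn": role_arn,
        # COPPA: this bot is not directed at children under 13.
        "dataPrivacy": {"childDirected": False},
        # 5-minute idle session timeout, expressed in seconds.
        "idleSessionTTLInSeconds": 5 * 60,
    }


def locale_request(bot_id, threshold=0.8, locale_id="en_US"):
    """Keyword arguments for lexv2-models create_bot_locale."""
    if not 0.0 <= threshold <= 1.0:
        raise ValueError("confidence threshold must be between 0.0 and 1.0")
    return {
        "botId": bot_id,
        "botVersion": "DRAFT",
        "localeId": locale_id,
        "nluIntentConfidenceThreshold": threshold,
    }


def create_bot_with_locale(bot_name, role_arn=ROLE_ARN):
    import boto3  # imported lazily so the request builders work without boto3

    lex = boto3.client("lexv2-models")
    bot_id = lex.create_bot(**bot_request(bot_name, role_arn))["botId"]
    # Wait until the bot has finished creating before adding a locale.
    lex.get_waiter("bot_available").wait(botId=bot_id)
    lex.create_bot_locale(**locale_request(bot_id))
    return bot_id
```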

Upload content to Amazon S3

Now you create an S3 bucket to store the documents you want to use for your knowledge base.

- On the Amazon S3 console, choose Buckets in the navigation pane.

- Choose Create bucket.

- For Bucket name, enter a unique name.

- Keep the default values for all other options and choose Create bucket.

For this post, we created an FAQ document for the fictitious hotel chain called Example Corp FictitiousHotels. Download the PDF document to follow along.

- On the Buckets page, navigate to the bucket you created.

If you don’t see it, you can search for it by name.

- Choose Upload.

- Choose Add files.

- Choose the ExampleCorpFicticiousHotelsFAQ.pdf file that you downloaded.

- Choose Upload.

The file will now be accessible in the S3 bucket.
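If you prefer to script the bucket and upload, the following minimal boto3 sketch shows one way to do it. The bucket name and Region are placeholders, and the sketch assumes the PDF is in the current directory. One detail the console handles for you: `create_bucket` must omit `LocationConstraint` in us-east-1 and must include it in every other Region.

```python
"""Create an S3 bucket and upload the FAQ PDF (sketch)."""
from pathlib import Path

PDF_NAME = "ExampleCorpFicticiousHotelsFAQ.pdf"


def create_bucket_kwargs(bucket_name, region):
    """Keyword arguments for s3 create_bucket in the given Region."""
    kwargs = {"Bucket": bucket_name}
    # us-east-1 must omit LocationConstraint; other Regions require it.
    if region != "us-east-1":
        kwargs["CreateBucketConfiguration"] = {"LocationConstraint": region}
    return kwargs


def upload_faq(bucket_name, region, pdf_path=PDF_NAME):
    import boto3  # imported lazily so create_bucket_kwargs works without boto3

    s3 = boto3.client("s3", region_name=region)
    s3.create_bucket(**create_bucket_kwargs(bucket_name, region))
    # Use the file name as the object key so the PDF sits at the bucket root.
    s3.upload_file(str(pdf_path), bucket_name, Path(pdf_path).name)
```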

Create a knowledge base

Now you can set up the knowledge base:

- On the Amazon Bedrock console, choose Knowledge base in the navigation pane.

- Choose Create knowledge base.

- For Knowledge base name, enter a name.

- For Knowledge base description, enter an optional description.

- Select Create and use a new service role.

- For Service role name, enter a name or keep the default.

- Choose Next.

- For Data source name, enter a name.

- Choose Browse S3 and navigate to the S3 bucket you uploaded the PDF file to earlier.

- Choose Next.

- Choose an embeddings model.

- Select Quick create a new vector store to create a new OpenSearch Serverless vector store to store the vectorized content.

- Choose Next.

- Review your configuration, then choose Create knowledge base.

After a few minutes, the knowledge base will have been created.

- Choose Sync to chunk the documents, calculate the embeddings, and store them in the vector store.

This may take a while. You can proceed with the rest of the steps, but the syncing needs to finish before you can query the knowledge base.

- Copy the knowledge base ID. You will reference this when you add this knowledge base to your Amazon Lex bot.
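A sync corresponds to an ingestion job in the Bedrock Agent API. Because querying must wait for the sync to finish, a script can start the job and poll until it reaches a terminal state. This is a minimal sketch, assuming a `bedrock-agent` boto3 client. It also needs the data source ID, which this post does not otherwise use; you can find it in the data source section of the knowledge base page. The poll interval and timeout are arbitrary choices.

```python
"""Start a knowledge base sync (ingestion job) and wait for it to finish (sketch)."""
import time

TERMINAL_STATUSES = {"COMPLETE", "FAILED", "STOPPED"}


def wait_for_ingestion(client, knowledge_base_id, data_source_id,
                       poll_seconds=10, timeout_seconds=1800, sleep=time.sleep):
    """Start an ingestion job and poll until it reaches a terminal status."""
    job = client.start_ingestion_job(
        knowledgeBaseId=knowledge_base_id, dataSourceId=data_source_id
    )["ingestionJob"]
    deadline = time.monotonic() + timeout_seconds
    while job["status"] not in TERMINAL_STATUSES:
        if time.monotonic() > deadline:
            raise TimeoutError(f"ingestion job {job['ingestionJobId']} did not finish")
        sleep(poll_seconds)
        job = client.get_ingestion_job(
            knowledgeBaseId=knowledge_base_id,
            dataSourceId=data_source_id,
            ingestionJobId=job["ingestionJobId"],
        )["ingestionJob"]
    return job


if __name__ == "__main__":
    import boto3

    agent = boto3.client("bedrock-agent")
    # Placeholders: use your own knowledge base ID and data source ID.
    result = wait_for_ingestion(agent, "YOUR_KB_ID", "YOUR_DATA_SOURCE_ID")
    print(result["status"])
```

Injecting `sleep` and the client keeps the polling logic testable without calling AWS.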

Add QnAIntent to the Amazon Lex bot

To add QnAIntent, complete the following steps:

- On the Amazon Lex console, choose Bots in the navigation pane.

- Choose your bot.

- In the navigation pane, choose Intents.

- On the Add intent menu, choose Use built-in intent.

- For Built-in intent, choose AMAZON.QnAIntent.

- For Intent name, enter a name.

- Choose Add.

- Choose the model you want to use to generate the answers (in this case, Anthropic Claude 3 Sonnet; you can select Anthropic Claude 3 Haiku for a lower-cost option with lower latency).

- For Choose knowledge store, select Knowledge base for Amazon Bedrock.

- For Knowledge base for Amazon Bedrock Id, enter the ID you noted earlier when you created your knowledge base.

- Choose Save intent.

- Choose Build to build the bot.

- Choose Test to test the new intent.
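For scripted setups, the Lex V2 `create_intent` operation accepts a `parentIntentSignature` of `AMAZON.QnAIntent` and a `qnAIntentConfiguration`. The following minimal sketch builds that request and then builds the locale. It rests on two assumptions that you should verify against the CreateIntent API reference. First, we assume the `bedrockKnowledgeBaseArn` field takes the knowledge base ARN built from the ID you copied earlier. Second, we assume the console's model choice maps to a foundation-model ARN such as the one for Claude 3 Sonnet shown here.

```python
"""Add an AMAZON.QnAIntent backed by a Bedrock knowledge base, then build (sketch)."""

CLAUDE_3_SONNET = "anthropic.claude-3-sonnet-20240229-v1:0"
CLAUDE_3_HAIKU = "anthropic.claude-3-haiku-20240307-v1:0"  # lower cost and latency


def foundation_model_arn(region, model_id):
    # Foundation-model ARNs leave the account field empty.
    return f"arn:aws:bedrock:{region}::foundation-model/{model_id}"


def knowledge_base_arn(region, account_id, knowledge_base_id):
    return f"arn:aws:bedrock:{region}:{account_id}:knowledge-base/{knowledge_base_id}"


def qna_intent_request(bot_id, intent_name, kb_arn, region,
                       model_id=CLAUDE_3_SONNET, locale_id="en_US"):
    """Keyword arguments for lexv2-models create_intent."""
    return {
        "intentName": intent_name,
        "botId": bot_id,
        "botVersion": "DRAFT",
        "localeId": locale_id,
        "parentIntentSignature": "AMAZON.QnAIntent",
        "qnAIntentConfiguration": {
            "dataSourceConfiguration": {
                "bedrockKnowledgeStoreConfiguration": {"bedrockKnowledgeBaseArn": kb_arn}
            },
            "bedrockModelConfiguration": {"modelArn": foundation_model_arn(region, model_id)},
        },
    }


def add_qna_intent_and_build(bot_id, intent_name, kb_arn, region, locale_id="en_US"):
    import boto3  # imported lazily so the request builders work without boto3

    lex = boto3.client("lexv2-models", region_name=region)
    lex.create_intent(**qna_intent_request(bot_id, intent_name, kb_arn, region, locale_id=locale_id))
    lex.build_bot_locale(botId=bot_id, botVersion="DRAFT", localeId=locale_id)
    # Block until the build finishes so the bot can be tested right away.
    lex.get_waiter("bot_locale_built").wait(botId=bot_id, botVersion="DRAFT", localeId=locale_id)
```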

The following screenshot shows an example conversation with the bot.

In the second question about the Miami pool hours, you refer back to the previous question about pool hours in Las Vegas and still get a relevant answer based on the conversation history.

It’s also possible to ask questions that require the bot to reason a bit around the available data. When we asked about a good resort for a family vacation, the bot recommended the Orlando resort based on the availability of activities for kids, proximity to theme parks, and more.
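The follow-up behavior depends on conversation history, which Lex keys on the session ID. The following minimal sketch sends several questions through the Lex V2 runtime `recognize_text` operation with one shared session ID. It assumes the built-in test alias `TSTALIASID`, which points at the draft bot. The example questions are hypothetical phrasings of the Las Vegas and Miami exchange described above.

```python
"""Ask a sequence of questions in one Lex session so follow-ups keep context (sketch)."""
import uuid

TEST_ALIAS_ID = "TSTALIASID"  # built-in test alias for the draft version


def message_texts(response):
    """Extract the text of each message in a recognize_text response."""
    return [m["content"] for m in response.get("messages", []) if "content" in m]


def run_conversation(runtime, bot_id, questions, locale_id="en_US"):
    # One session ID for every turn, so later questions can refer to earlier ones.
    session_id = str(uuid.uuid4())
    answers = []
    for text in questions:
        response = runtime.recognize_text(
            botId=bot_id, botAliasId=TEST_ALIAS_ID, localeId=locale_id,
            sessionId=session_id, text=text,
        )
        answers.append(message_texts(response))
    return answers


if __name__ == "__main__":
    import boto3

    runtime = boto3.client("lexv2-runtime")
    # Hypothetical questions; replace YOUR_BOT_ID with your bot's ID.
    for answer in run_conversation(runtime, "YOUR_BOT_ID", [
        "What are the pool hours in Las Vegas?",
        "And in Miami?",
    ]):
        print(answer)
```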

Update the confidence threshold

You may have some questions accidentally match your other intents. If you run into this, you can adjust the confidence threshold for your bot. To modify this setting, choose the language of your bot (English) and in the Language details section, choose Edit.

After you update the confidence threshold, rebuild the bot for the change to take effect.
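The update-then-rebuild sequence can be sketched with `update_bot_locale` followed by `build_bot_locale`. This minimal sketch takes an injected `lexv2-models` client. As a precaution, it carries over the locale's `description` and `voiceSettings` from `describe_bot_locale`. We have not confirmed that omitted optional fields would otherwise be cleared, so check the UpdateBotLocale reference.

```python
"""Change the intent classification confidence threshold and rebuild (sketch)."""


def set_confidence_threshold(lex, bot_id, threshold, locale_id="en_US"):
    if not 0.0 <= threshold <= 1.0:
        raise ValueError("threshold must be between 0.0 and 1.0")
    current = lex.describe_bot_locale(botId=bot_id, botVersion="DRAFT", localeId=locale_id)
    # Precaution: resend optional settings so the update cannot drop them.
    extra = {key: current[key] for key in ("description", "voiceSettings") if key in current}
    lex.update_bot_locale(
        botId=bot_id, botVersion="DRAFT", localeId=locale_id,
        nluIntentConfidenceThreshold=threshold, **extra,
    )
    # The new threshold only takes effect after a rebuild, as in the console.
    lex.build_bot_locale(botId=bot_id, botVersion="DRAFT", localeId=locale_id)


if __name__ == "__main__":
    import boto3

    set_confidence_threshold(boto3.client("lexv2-models"), "YOUR_BOT_ID", 0.8)
```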

Add additional steps

By default, the next step in the conversation for the bot is set to Wait for user input after a question has been answered. This keeps the conversation in the bot and allows a user to ask follow-up questions or invoke any of the other intents in your bot.

If you want the conversation to end and return control to the calling application (for example, Amazon Connect), you can change this behavior to End conversation. To update the setting, complete the following steps:

- On the Amazon Lex console, navigate to the QnAIntent.

- In the Fulfillment section, choose Advanced options.

- On the Next step in conversation dropdown menu, choose End conversation.

If you would like the bot to add a specific message after each response from the QnAIntent (such as "Can I help you with anything else?"), you can add a closing response to the QnAIntent.
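In the Lex V2 API, a closing response is an intent's `intentClosingSetting`. The following minimal sketch builds that setting and applies it with `update_intent`. It assumes `update_intent` replaces the whole intent definition. For that reason it first copies a few existing fields from `describe_intent` (our own selection, including the QnAIntent configuration and the fulfillment settings that hold the next step). It covers only the closing response, not the End conversation option.

```python
"""Add a closing response to an existing QnAIntent (sketch)."""

# Fields copied from describe_intent so update_intent keeps them (assumed sufficient).
CARRY_OVER = ("intentName", "description", "parentIntentSignature",
              "qnAIntentConfiguration", "fulfillmentCodeHook")


def closing_setting(message):
    """An intentClosingSetting that plays one plain-text message."""
    return {
        "active": True,
        "closingResponse": {
            "messageGroups": [{"message": {"plainTextMessage": {"value": message}}}],
            "allowInterrupt": True,
        },
    }


def add_closing_response(lex, bot_id, intent_id, message, locale_id="en_US"):
    current = lex.describe_intent(
        intentId=intent_id, botId=bot_id, botVersion="DRAFT", localeId=locale_id
    )
    # Skip missing or None fields: boto3 rejects None for optional parameters.
    kwargs = {key: current[key] for key in CARRY_OVER if current.get(key) is not None}
    lex.update_intent(
        intentId=intent_id, botId=bot_id, botVersion="DRAFT", localeId=locale_id,
        intentClosingSetting=closing_setting(message), **kwargs,
    )
```

Rebuild the bot afterward, as with any other intent change.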

Clean up

To avoid incurring ongoing costs, delete the resources you created as part of this post:

- Amazon Lex bot

- S3 bucket

- OpenSearch Serverless collection (This is not automatically deleted when you delete your knowledge base)

- Knowledge bases
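The cleanup can also be scripted. The following minimal sketch uses injected boto3 clients and takes the IDs of the resources you created as placeholders. It deletes the knowledge base before its OpenSearch Serverless collection, because deleting the knowledge base leaves the collection behind. It then empties the S3 bucket, since S3 refuses to delete a non-empty bucket. The sketch assumes versioning is off, which is the default for a new bucket.

```python
"""Delete the resources created in this walkthrough (sketch)."""


def empty_and_delete_bucket(s3, bucket_name):
    paginator = s3.get_paginator("list_objects_v2")
    for page in paginator.paginate(Bucket=bucket_name):
        keys = [{"Key": obj["Key"]} for obj in page.get("Contents", [])]
        if keys:
            # A page holds at most 1,000 keys, which is also the delete_objects limit.
            s3.delete_objects(Bucket=bucket_name, Delete={"Objects": keys})
    s3.delete_bucket(Bucket=bucket_name)


def clean_up(clients, bot_id, knowledge_base_id, collection_id, bucket_name):
    clients["lexv2-models"].delete_bot(botId=bot_id, skipResourceInUseCheck=True)
    clients["bedrock-agent"].delete_knowledge_base(knowledgeBaseId=knowledge_base_id)
    # The vector store collection is not removed with the knowledge base.
    clients["opensearchserverless"].delete_collection(id=collection_id)
    empty_and_delete_bucket(clients["s3"], bucket_name)


def make_clients(region):
    import boto3  # imported lazily so clean_up can be exercised with fakes

    names = ("lexv2-models", "bedrock-agent", "opensearchserverless", "s3")
    return {name: boto3.client(name, region_name=region) for name in names}
```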

Conclusion

The new QnAIntent in Amazon Lex enables natural conversations by connecting customers with curated knowledge sources. Powered by Amazon Bedrock, the QnAIntent understands questions in natural language and responds conversationally, keeping customers engaged with contextual, follow-up responses.

QnAIntent puts the latest innovations within reach to transform static FAQs into flowing dialogues that resolve customer needs. This helps you scale excellent self-service to delight customers.

Try it out for yourself. Reinvent your customer experience!

About the Author

Thomas Rinfuss is a Sr. Solutions Architect on the Amazon Lex team. He invents, develops, prototypes, and evangelizes new technical features and solutions for Language AI services that improve the customer experience and ease adoption.