Power Your LLM Training and Evaluation with the New SageMaker AI Generative AI Tools

Today we are excited to introduce the Text Ranking and Question and Answer UI templates to SageMaker AI customers. The Text Ranking template enables human annotators to rank multiple responses from a large language model (LLM) based on custom criteria, such as relevance, clarity, or factual accuracy. This ranked feedback provides critical insights that help refine models through Reinforcement Learning from Human Feedback (RLHF), generating responses that better align with human preferences. The Question and Answer template facilitates the creation of high-quality Q&A pairs based on provided text passages. These pairs act as demonstration data for Supervised Fine-Tuning (SFT), teaching models how to respond to similar inputs accurately.

In this blog post, we’ll walk you through how to set up these templates in SageMaker to create high-quality datasets for training your large language models. Let’s explore how you can leverage these new tools.

Text Ranking

The Text Ranking template allows annotators to rank multiple text responses generated by a large language model based on customizable criteria such as relevance, clarity, or correctness. Annotators are presented with a prompt and several model-generated responses, which they rank according to guidelines specific to your use case. The ranked data is captured in a structured format that records the re-ranked indices for each criterion, such as “clarity” or “inclusivity.” You can use this information to fine-tune models with RLHF, aligning model outputs more closely with human preferences. The template is also effective for evaluating the quality of LLM outputs, because it shows how well responses match the intended criteria.
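To make the RLHF connection concrete, here is a minimal sketch of one common way to use ranked feedback: expanding a single ranking into pairwise preferences (chosen versus rejected) of the kind often used for reward-model training. The function name, the record keys (`prompt`, `chosen`, `rejected`), and the convention that rank 1 is best are assumptions made for illustration; they are not part of the template’s output format.

```python
from itertools import combinations


def ranking_to_pairs(prompt, responses, ranks):
    """Turn one annotator's ranks (assumed: 1 = best) into preference pairs.

    ranks[i] is the rank given to responses[i]. Equal ranks are treated as
    ties and produce no pair, since neither response is preferred.
    """
    if len(responses) != len(ranks):
        raise ValueError("responses and ranks must have the same length")
    pairs = []
    for i, j in combinations(range(len(responses)), 2):
        if ranks[i] == ranks[j]:
            continue  # tie: no preference signal for this pair
        better, worse = (i, j) if ranks[i] < ranks[j] else (j, i)
        pairs.append(
            {
                "prompt": prompt,
                "chosen": responses[better],
                "rejected": responses[worse],
            }
        )
    return pairs


# Hypothetical data: response B is ranked first, A and C are tied for second.
example_pairs = ranking_to_pairs(
    "What is Amazon S3?",
    ["Response A", "Response B", "Response C"],
    [2, 1, 2],
)
```

Skipping tied responses is a design choice in this sketch; depending on your training setup, you might instead keep ties as a separate signal.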

Setting Up in the SageMaker AI Console

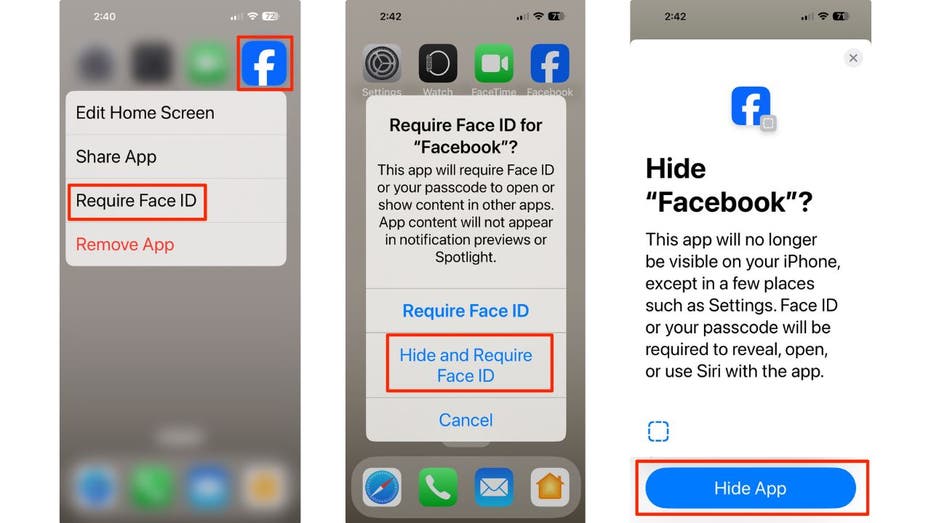

A new Generative AI category has been added under Task Type in the SageMaker AI console, allowing you to select these templates. To configure the labeling job using the AWS Management Console, complete the following steps:

- On the SageMaker AI console, under Ground Truth in the navigation pane, choose Labeling job.

- Choose Create labeling job.

- Specify your input manifest location and output path. To configure the Text Ranking input file, use Manual Data Setup under Create Labeling Job and provide a JSON file in which the prompt is stored under the source field and the list of model responses is stored under the responses field. Text Ranking does not support Automated Data Setup.

Here is an example of our input manifest file:
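As an illustration, the following Python sketch writes a manifest in the shape described above: one JSON object per line, with the prompt under `source` and the candidate answers under `responses`. The sample prompts, the helper name `build_text_ranking_manifest`, and the choice to represent each response as a plain string are assumptions made for this sketch.

```python
import json


def build_text_ranking_manifest(records):
    """Return JSON Lines text with one {"source", "responses"} object per line.

    records is an iterable of (prompt, list_of_responses) tuples.
    """
    lines = []
    for prompt, responses in records:
        if len(responses) < 2:
            raise ValueError("ranking needs at least two responses per prompt")
        lines.append(
            json.dumps(
                {"source": prompt, "responses": list(responses)},
                ensure_ascii=False,
            )
        )
    return "\n".join(lines) + "\n"


# Hypothetical prompts and model responses, for illustration only.
manifest_text = build_text_ranking_manifest(
    [
        ("Explain what a data lake is.", ["Response 1", "Response 2", "Response 3"]),
        ("Summarize the benefits of serverless.", ["Response 1", "Response 2"]),
    ]
)
```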

Upload this input manifest file into your S3 location and provide the S3 path to this file under Input dataset location.

- Select Generative AI as the task type and choose the Text Ranking UI.

- Choose Next.

- Enter your labeling instructions. Enter the dimensions you want to include in the Ranking dimensions section. For example, in the image above, the dimensions are Helpfulness and Clarity, but you can add, remove, or customize these based on your specific needs by clicking the “+” button to add new dimensions or the trash icon to remove them. Additionally, you have the option to allow tie rankings by selecting the checkbox. This option enables annotators to rank two or more responses equally if they believe the responses are of the same quality for a particular dimension.

- Choose Preview to display the UI template for review.

- Choose Create to create the labeling job.

When annotators submit their evaluations, their responses are saved directly to your specified S3 bucket. The output manifest file includes the original data fields and a worker-response-ref that points to a worker response file in S3. This worker response file contains the ranked responses for each specified dimension, which can be used to fine-tune or evaluate your model’s outputs. If multiple annotators have worked on the same data object, their individual annotations are included within this file under an answers key, which is an array of responses. Each response includes the annotator’s input and metadata such as acceptance time, submission time, and worker ID. Here is an example of the output JSON file containing the annotations:
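The sketch below shows how a worker response file with this general structure could be aggregated across annotators. Only the top-level `answers` array and the kinds of metadata are taken from the description above; the exact key spellings (`acceptanceTime`, `submissionTime`, `workerId`, `answerContent`), the nested `rankings` key, and the convention that each list holds one rank per response are assumptions, so inspect a real output file before relying on them.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical worker response file; key names inside each answer are assumed.
SAMPLE_RANKING_RESPONSE = {
    "answers": [
        {
            "acceptanceTime": "2025-01-01T10:00:00Z",
            "submissionTime": "2025-01-01T10:02:00Z",
            "workerId": "worker-1",
            "answerContent": {
                "rankings": {"Helpfulness": [1, 2, 3], "Clarity": [1, 2, 3]}
            },
        },
        {
            "acceptanceTime": "2025-01-01T11:00:00Z",
            "submissionTime": "2025-01-01T11:03:00Z",
            "workerId": "worker-2",
            "answerContent": {
                "rankings": {"Helpfulness": [2, 1, 3], "Clarity": [1, 3, 2]}
            },
        },
    ]
}


def mean_ranks(worker_response, content_key="answerContent", rankings_key="rankings"):
    """Average each response's rank per dimension across all annotators."""
    per_dimension = defaultdict(list)
    for answer in worker_response.get("answers", []):
        rankings = answer.get(content_key, {}).get(rankings_key, {})
        for dimension, ranks in rankings.items():
            per_dimension[dimension].append(ranks)
    # zip(*rows) groups the ranks that different annotators gave one response.
    return {
        dimension: [mean(column) for column in zip(*rows)]
        for dimension, rows in per_dimension.items()
    }


average_ranks = mean_ranks(SAMPLE_RANKING_RESPONSE)
```

Averaging ranks is only one possible consolidation strategy; majority voting or keeping every annotator’s ranking separately may suit your use case better.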

Question and Answer

The Question and Answer template allows you to create datasets for Supervised Fine-Tuning (SFT) by generating question-and-answer pairs from text passages. Annotators read the provided text and create relevant questions and corresponding answers. This process acts as a source of demonstration data, guiding the model on how to handle similar tasks. The template supports flexible input, letting annotators reference entire passages or specific sections of text for more targeted Q&A. A color-coded matching feature visually links questions to the relevant sections, helping streamline the annotation process. By using these Q&A pairs, you enhance the model’s ability to follow instructions and respond accurately to real-world inputs.

Setting Up in the SageMaker AI Console

Setting up a labeling job with the Question and Answer template follows steps similar to those for the Text Ranking template. The differences are in how you configure the input file and which UI template you select.

- On the SageMaker AI console, under Ground Truth in the navigation pane, choose Labeling job.

- Choose Create labeling job.

- Specify your input manifest location and output path. To configure the Question and Answer input file, use the Manual Data Setup and upload a JSON file where the source field contains the text passage. Annotators will use this text to generate questions and answers. Note that you can load the text from a .txt or .csv file and use Ground Truth’s Automated Data Setup to convert it to the required JSON format.

Here is an example of an input manifest file:
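As a rough sketch, a manifest of this kind can be produced from a list of passages, with each passage written as one JSON object per line under the `source` field. The helper name `build_qa_manifest` and the sample passages are hypothetical.

```python
import json


def build_qa_manifest(passages):
    """Return JSON Lines text with one {"source": passage} object per line.

    Empty or whitespace-only passages are skipped.
    """
    lines = []
    for passage in passages:
        text = passage.strip()
        if not text:
            continue
        # json.dumps escapes embedded newlines, so each record stays on one line.
        lines.append(json.dumps({"source": text}, ensure_ascii=False))
    return "\n".join(lines) + ("\n" if lines else "")


# Hypothetical passages, for illustration only.
qa_manifest_text = build_qa_manifest(
    [
        "Amazon S3 stores objects in buckets.\nEach object has a key.",
        "   ",
        "Ground Truth supports human labeling workflows.",
    ]
)
```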

Upload this input manifest file into your S3 location and provide the S3 path to this file under Input dataset location.

- Select Generative AI as the task type and choose the Question and Answer UI.

- Choose Next.

- Enter your labeling instructions. You can configure additional settings to control the task. You can specify the minimum and maximum number of Q&A pairs that workers should generate from the provided text passage. Additionally, you can define the minimum and maximum word counts for both the question and answer fields, so that the responses fit your requirements. You can also add optional question tags to categorize the question and answer pairs. For example, you might include tags such as “What,” “How,” or “Why” to guide the annotators in their task. If these predefined tags are insufficient, you have the option to allow workers to enter their own custom tags by enabling the Allow workers to specify custom tags feature. This flexibility facilitates annotations that meet the specific needs of your use case.

- Once these settings are configured, you can choose to Preview the UI to verify that it meets your needs before proceeding.

- Choose Create to create the labeling job.
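If you also want to sanity-check collected Q&A pairs downstream against the same kind of limits you configured in the task settings, a small validator along these lines may help. This is a hypothetical post-processing step, not something the template requires; the class name, field names, and default limits are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QaLimits:
    """Hypothetical limits mirroring the settings chosen for the task."""

    min_pairs: int = 1
    max_pairs: int = 5
    min_question_words: int = 3
    max_question_words: int = 50
    min_answer_words: int = 1
    max_answer_words: int = 200


def check_pairs(pairs, limits):
    """Return a list of human-readable problems; an empty list means all checks pass.

    pairs is a list of (question, answer) string tuples for one passage.
    """
    problems = []
    if not limits.min_pairs <= len(pairs) <= limits.max_pairs:
        problems.append(
            f"expected {limits.min_pairs}-{limits.max_pairs} pairs, got {len(pairs)}"
        )
    for index, (question, answer) in enumerate(pairs):
        q_words = len(question.split())
        a_words = len(answer.split())
        if not limits.min_question_words <= q_words <= limits.max_question_words:
            problems.append(f"pair {index}: question has {q_words} words")
        if not limits.min_answer_words <= a_words <= limits.max_answer_words:
            problems.append(f"pair {index}: answer has {a_words} words")
    return problems


ok_problems = check_pairs([("What is S3?", "Object storage.")], QaLimits())
bad_problems = check_pairs([("Why?", "")], QaLimits())
```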

When annotators submit their work, their responses are saved directly to your specified S3 bucket. The output manifest file contains the original data fields along with a worker-response-ref that points to the worker response file in S3. This worker response file includes the detailed annotations provided by the workers, such as the question-and-answer pairs generated for each task.
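To locate the worker response files from an output manifest, one option is to search each manifest record for the worker-response-ref key. The sketch below walks the record recursively so it does not depend on where the key is nested, which this post does not specify. The sample record, the label attribute name `my-qa-job`, and the S3 path are hypothetical.

```python
import json
from urllib.parse import urlparse


def find_worker_response_refs(node):
    """Yield every string stored under a 'worker-response-ref' key, at any depth."""
    if isinstance(node, dict):
        for key, value in node.items():
            if key == "worker-response-ref" and isinstance(value, str):
                yield value
            else:
                yield from find_worker_response_refs(value)
    elif isinstance(node, list):
        for item in node:
            yield from find_worker_response_refs(item)


def split_s3_uri(uri):
    """Split 's3://bucket/key' into (bucket, key)."""
    parsed = urlparse(uri)
    if parsed.scheme != "s3" or not parsed.netloc:
        raise ValueError(f"not an S3 URI: {uri}")
    return parsed.netloc, parsed.path.lstrip("/")


# Hypothetical output manifest line; the nesting shown here is an assumption.
sample_line = json.dumps(
    {
        "source": "Some passage text.",
        "my-qa-job": {
            "worker-response-ref": "s3://example-bucket/output/worker-response/0.json"
        },
    }
)
refs = list(find_worker_response_refs(json.loads(sample_line)))
bucket, key = split_s3_uri(refs[0])
```

With the bucket and key in hand, you could download each file using your preferred S3 client.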

Here’s an example of what the output might look like:
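As a rough illustration of how such a file could be post-processed into demonstration data for SFT, the sketch below flattens Q&A annotations into one record per pair. Only the top-level `answers` array comes from the description earlier in this post; the keys `answerContent`, `workerId`, `question_answer_pairs`, `question`, `answer`, and `tag` are assumptions made for this sketch rather than the documented schema, so check a real worker response file first.

```python
import json

# Hypothetical worker response file; key names inside each answer are assumed.
SAMPLE_QA_RESPONSE = {
    "answers": [
        {
            "workerId": "worker-1",
            "answerContent": {
                "question_answer_pairs": [
                    {
                        "question": "What does Ground Truth store in S3?",
                        "answer": "The worker responses.",
                        "tag": "What",
                    },
                    {
                        "question": "Why preview the UI?",
                        "answer": "To verify the task layout.",
                        "tag": "Why",
                    },
                ]
            },
        }
    ]
}


def to_sft_records(passage, worker_response, pairs_key="question_answer_pairs"):
    """Flatten every annotator's Q&A pairs into one record per pair."""
    records = []
    for answer in worker_response.get("answers", []):
        content = answer.get("answerContent", {})
        for pair in content.get(pairs_key, []):
            records.append(
                {
                    "context": passage,
                    "question": pair["question"],
                    "answer": pair["answer"],
                    "worker_id": answer.get("workerId"),
                }
            )
    return records


sft_records = to_sft_records(
    "Ground Truth saves worker responses to S3.", SAMPLE_QA_RESPONSE
)
# One JSON object per line, a common layout for fine-tuning datasets.
sft_jsonl = "\n".join(json.dumps(record) for record in sft_records)
```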

CreateLabelingJob API

In addition to creating these labeling jobs through the Amazon SageMaker AI console, customers can also use the Create Labeling Job API to set up Text Ranking and Question and Answer jobs programmatically. This method provides more flexibility for automation and integration into existing workflows. Using the API, you can define job configurations, input manifests, and worker task templates, and monitor the job’s progress directly from your application or system.
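A minimal sketch of assembling such a request in Python follows. The top-level parameter names match the boto3 `create_labeling_job` call, but every value is a placeholder, and the sketch assumes the worker task template has been uploaded to S3 and is referenced through `UiTemplateS3Uri`. Depending on the task type, additional fields (for example `PreHumanTaskLambdaArn` or `AnnotationConsolidationConfig`) may be needed, so check the API reference before using it.

```python
def build_labeling_job_request(
    job_name,
    manifest_s3_uri,
    output_s3_uri,
    role_arn,
    workteam_arn,
    ui_template_s3_uri,
    task_title,
    task_description,
    workers_per_object=1,
    task_time_limit_seconds=3600,
):
    """Assemble keyword arguments for SageMaker's create_labeling_job call."""
    return {
        "LabelingJobName": job_name,
        # Assumption: reuse the job name as the label attribute name.
        "LabelAttributeName": job_name,
        "InputConfig": {
            "DataSource": {"S3DataSource": {"ManifestS3Uri": manifest_s3_uri}}
        },
        "OutputConfig": {"S3OutputPath": output_s3_uri},
        "RoleArn": role_arn,
        "HumanTaskConfig": {
            "WorkteamArn": workteam_arn,
            "UiConfig": {"UiTemplateS3Uri": ui_template_s3_uri},
            "TaskTitle": task_title,
            "TaskDescription": task_description,
            "NumberOfHumanWorkersPerDataObject": workers_per_object,
            "TaskTimeLimitInSeconds": task_time_limit_seconds,
        },
    }


# All ARNs, bucket names, and paths below are placeholders.
request = build_labeling_job_request(
    job_name="text-ranking-demo",
    manifest_s3_uri="s3://example-bucket/input/manifest.jsonl",
    output_s3_uri="s3://example-bucket/output/",
    role_arn="arn:aws:iam::111122223333:role/ExampleGroundTruthRole",
    workteam_arn="arn:aws:sagemaker:us-east-1:111122223333:workteam/private-crowd/example",
    ui_template_s3_uri="s3://example-bucket/templates/text-ranking.liquid.html",
    task_title="Rank model responses",
    task_description="Rank each response for helpfulness and clarity.",
)

# To submit the job (requires boto3 and AWS credentials):
#   import boto3
#   boto3.client("sagemaker").create_labeling_job(**request)
```

Keeping request construction separate from the API call makes the configuration easy to review and test before any job is created.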

For a step-by-step guide on how to implement this, refer to the following notebooks, which walk through the entire process of setting up Human-in-the-Loop (HITL) workflows for Reinforcement Learning from Human Feedback (RLHF) using both the Text Ranking and Question and Answer templates. These notebooks guide you through setting up the required Ground Truth prerequisites, downloading sample JSON files with prompts and responses, converting them to Ground Truth input manifests, creating worker task templates, and monitoring the labeling jobs. They also cover post-processing the results to create a consolidated dataset with ranked responses.
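For the monitoring step, a simple polling loop is one option. The sketch below is not taken from the notebooks; it assumes a boto3 SageMaker client (or any object exposing `describe_labeling_job`) and reads the `LabelingJobStatus` field from the response. The function name, polling interval, and retry limit are arbitrary choices for illustration.

```python
import time

# Statuses after which a labeling job no longer changes.
TERMINAL_STATES = {"Completed", "Failed", "Stopped"}


def wait_for_labeling_job(client, job_name, poll_seconds=60, max_polls=120, sleep=time.sleep):
    """Poll describe_labeling_job until the job reaches a terminal status.

    client is expected to be boto3.client("sagemaker") or a compatible object.
    Returns the final status string, or raises TimeoutError after max_polls.
    """
    for _ in range(max_polls):
        description = client.describe_labeling_job(LabelingJobName=job_name)
        status = description["LabelingJobStatus"]
        if status in TERMINAL_STATES:
            return status
        sleep(poll_seconds)
    raise TimeoutError(f"labeling job {job_name} did not finish after {max_polls} polls")


# Example usage (requires boto3 and AWS credentials):
#   import boto3
#   final_status = wait_for_labeling_job(boto3.client("sagemaker"), "text-ranking-demo")
```

Passing `sleep` as a parameter is a small design choice that lets you test the loop without waiting.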

Conclusion

With the introduction of the Text Ranking and Question and Answer templates, Amazon SageMaker AI empowers customers to generate high-quality datasets for training large language models more efficiently. These built-in capabilities simplify the process of fine-tuning models for specific tasks and aligning their outputs with human preferences, whether through supervised fine-tuning or reinforcement learning from human feedback. By leveraging these templates, you can better evaluate and refine your models to meet the needs of your specific application, helping achieve more accurate, reliable, and user-aligned outputs. Whether you’re creating datasets for training or evaluating your models’ outputs, SageMaker AI provides the tools you need to succeed in building state-of-the-art generative AI solutions.

To begin creating fine-tuning datasets with the new templates:

- Visit the Amazon SageMaker AI console.

- Refer to the SageMaker AI APIs for programmatic access.

- Use the AWS CLI for command-line interactions.

About the authors

Sundar Raghavan is a Generative AI Specialist Solutions Architect at AWS, helping customers use Amazon Bedrock and next-generation AWS services to design, build and deploy AI agents and scalable generative AI applications. In his free time, Sundar loves exploring new places, sampling local eateries and embracing the great outdoors.

Jesse Manders is a Senior Product Manager on Amazon Bedrock, the AWS Generative AI developer service. He works at the intersection of AI and human interaction with the goal of creating and improving generative AI products and services to meet our needs. Previously, Jesse held engineering team leadership roles at Apple and Lumileds, and was a senior scientist in a Silicon Valley startup. He has an M.S. and Ph.D. from the University of Florida, and an MBA from the University of California, Berkeley, Haas School of Business.

Niharika Jayanti is a Front-End Engineer at Amazon, where she designs and develops user interfaces to delight customers. She contributed to the successful launch of LLM evaluation tools on Amazon Bedrock and Amazon SageMaker Unified Studio. Outside of work, Niharika enjoys swimming, hitting the gym and crocheting.

Muyun Yan is a Senior Software Engineer on the Amazon Web Services (AWS) SageMaker AI team. With over 6 years at AWS, she specializes in developing machine learning-based labeling platforms. Her work focuses on building and deploying innovative software applications for labeling solutions, enabling customers to access cutting-edge labeling capabilities. Muyun holds an M.S. in Computer Engineering from Boston University.

Kavya Kotra is a Software Engineer on the Amazon SageMaker Ground Truth team, helping build scalable and reliable software applications. Kavya played a key role in the development and launch of the Generative AI Tools on SageMaker. Previously, Kavya held engineering roles within AWS EC2 Networking and Amazon Audible. In her free time, she enjoys painting and exploring Seattle’s nature scene.

Alan Ismaiel is a software engineer at AWS based in New York City. He focuses on building and maintaining scalable AI/ML products, like Amazon SageMaker Ground Truth and Amazon Bedrock. Outside of work, Alan is learning how to play pickleball, with mixed results.
