Uphold ethical standards in fashion using multimodal toxicity detection with Amazon Bedrock Guardrails


The global fashion industry is estimated to be valued at $1.84 trillion in 2025, accounting for approximately 1.63% of the world’s GDP (Statista, 2025). With that much capital in play comes enormous potential for toxic content and misuse.

In the fashion industry, teams are frequently innovating quickly, often utilizing AI. Sharing content, whether it be through videos, designs, or otherwise, can lead to content moderation challenges. There remains a risk (through intentional or unintentional actions) of inappropriate, offensive, or toxic content being produced and shared. This can lead to violations of company policy and irreparable damage to brand reputation. Implementing guardrails while using AI to innovate faster within this industry can provide long-lasting benefits.

In this post, we cover the use of the multimodal toxicity detection feature of Amazon Bedrock Guardrails to guard against toxic content. Whether you’re an enterprise giant in the fashion industry or an up-and-coming brand, you can use this solution to screen potentially harmful content before it impacts your brand’s reputation and ethical standards. For the purposes of this post, ethical standards refer to toxic, disrespectful, or harmful content and images that could be created by fashion designers.

Brand reputation represents a priceless currency that transcends trends, with companies competing not just for sales but for consumer trust and loyalty. As technology evolves, the need for effective reputation management strategies should include using AI in responsible ways. In this growing age of innovation, as the fashion industry evolves and creatives innovate faster, brands that strategically manage their reputation while adapting to changing consumer preferences and global trends will distinguish themselves from the rest in the industry (source). Take the first step toward responsible AI within your creative practices with Amazon Bedrock Guardrails.

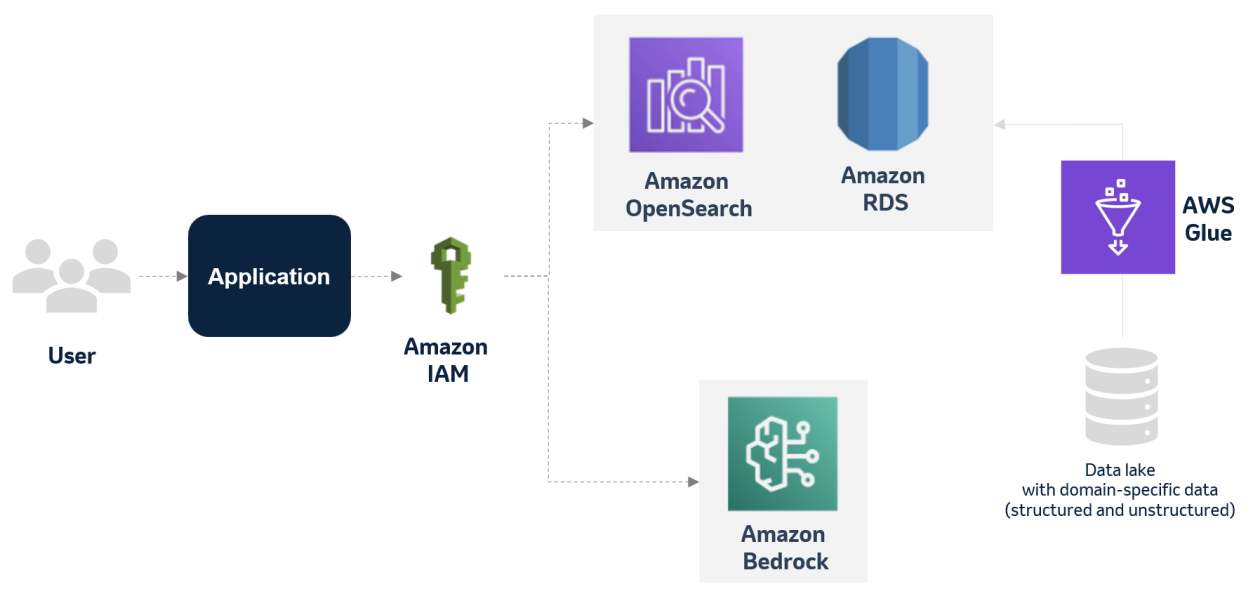

Solution overview

To incorporate multimodal toxicity detection guardrails in an image-generating workflow with Amazon Bedrock, you can use the following AWS services:

- Amazon Simple Storage Service (Amazon S3) to store fashion images

- Amazon S3 Event Notifications to trigger workflow processing when new images are uploaded

- AWS Lambda to process images

- Amazon Bedrock Guardrails to analyze content

The following diagram illustrates the solution architecture.

Prerequisites

For this solution, you must have the following:

- An AWS account

- An AWS Identity and Access Management (IAM) execution role for Lambda

The following IAM policy grants specific permissions for a Lambda function to interact with Amazon CloudWatch Logs, access objects in an S3 bucket, and apply Amazon Bedrock guardrails, enabling the function to log its activities, read from Amazon S3, and use Amazon Bedrock content filtering capabilities. Before using this policy, update the placeholders with your resource-specific values:
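As a minimal sketch, a policy matching that description can be generated with the Python snippet below. The statement layout, the Sid values, and the function arguments are assumptions made for illustration and might differ from the policy used in the original solution. The Region, account ID, function name, bucket name, and guardrail ID in the example call are placeholders.

```python
import json


def build_lambda_policy(region, account_id, function_name, bucket_name, guardrail_id):
    """Return an IAM policy document (as a dict) for the moderation function."""
    log_group_arn = (
        f"arn:aws:logs:{region}:{account_id}:log-group:/aws/lambda/{function_name}"
    )
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Lets the function write its activity to CloudWatch Logs
                "Sid": "WriteFunctionLogs",
                "Effect": "Allow",
                "Action": [
                    "logs:CreateLogGroup",
                    "logs:CreateLogStream",
                    "logs:PutLogEvents",
                ],
                "Resource": [log_group_arn, f"{log_group_arn}:*"],
            },
            {
                # Lets the function read uploaded images from the bucket
                "Sid": "ReadUploadedImages",
                "Effect": "Allow",
                "Action": "s3:GetObject",
                "Resource": f"arn:aws:s3:::{bucket_name}/*",
            },
            {
                # Lets the function call ApplyGuardrail on one specific guardrail
                "Sid": "ApplyGuardrail",
                "Effect": "Allow",
                "Action": "bedrock:ApplyGuardrail",
                "Resource": (
                    f"arn:aws:bedrock:{region}:{account_id}:guardrail/{guardrail_id}"
                ),
            },
        ],
    }


if __name__ == "__main__":
    # Placeholder values only; substitute your own resources
    policy = build_lambda_policy(
        "us-east-1", "111122223333", "fashion-image-moderation",
        "amzn-s3-demo-bucket", "your-guardrail-id",
    )
    print(json.dumps(policy, indent=2))
```

The printed JSON can be pasted into the IAM console as an inline policy on the execution role. Scoping each statement to a single log group, bucket, and guardrail keeps the role close to least privilege.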

Create a multimodal guardrail in Amazon Bedrock

The foundation of our moderation system is a guardrail in Amazon Bedrock configured specifically for image content. To create a multimodal toxicity detection guardrail, complete the following steps:

- On the Amazon Bedrock console, choose Guardrails under Safeguards in the navigation pane.

- Choose Create guardrail.

- Enter a name and optional description, and create your guardrail.

Configure content filters for multiple modalities

Next, you configure the content filters. Complete the following steps:

- On the Configure content filters page, choose Image under Filter for prompts. This allows the guardrail to process visual content alongside text.

- Configure the categories for Hate, Insults, Sexual, and Violence to filter both text and image content. The Misconduct and Prompt threat categories are available for text content filtering only.

- Create your filters.

By setting up these filters, you create a comprehensive safeguard that can detect potentially harmful content across multiple modalities, enhancing the safety and reliability of your AI applications.
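If you prefer to script the console steps above, the following sketch shows one way to create an equivalent guardrail with the AWS SDK for Python (Boto3). It assumes a HIGH filter strength for all four categories and uses a hypothetical guardrail name and blocked-content message; none of these values come from the original walkthrough. The client is passed in as an argument, so you would create it yourself with boto3.client("bedrock").

```python
# Categories that this post configures for both text and image content
IMAGE_CATEGORIES = ("HATE", "INSULTS", "SEXUAL", "VIOLENCE")


def build_content_policy(strength="HIGH"):
    """Build a contentPolicyConfig that filters text and images."""
    return {
        "filtersConfig": [
            {
                "type": category,
                "inputStrength": strength,
                "outputStrength": strength,
                "inputModalities": ["TEXT", "IMAGE"],
                "outputModalities": ["TEXT", "IMAGE"],
            }
            for category in IMAGE_CATEGORIES
        ]
    }


def create_image_guardrail(bedrock_client, name, strength="HIGH"):
    """Create the guardrail and return its ID and version."""
    blocked_message = "This content was blocked by the guardrail."  # assumed wording
    response = bedrock_client.create_guardrail(
        name=name,
        description="Screens fashion images and text for toxic content",
        contentPolicyConfig=build_content_policy(strength),
        blockedInputMessaging=blocked_message,
        blockedOutputMessaging=blocked_message,
    )
    return response["guardrailId"], response["version"]
```

Calling create_image_guardrail(boto3.client("bedrock"), "fashion-image-guardrail") returns the guardrail ID and version, which the Lambda function needs later when it calls ApplyGuardrail.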

Create an S3 bucket

You need a place for users (or other processes) to upload the images that require moderation. To create an S3 bucket, complete the following steps:

- On the Amazon S3 console, choose Buckets in the navigation pane.

- Choose Create bucket.

- Enter a unique name and choose the AWS Region where you want to host the bucket.

- For this basic setup, standard settings are usually sufficient.

- Create your bucket.

This bucket is where our workflow begins—new images landing here will trigger the next step.
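The same bucket can be created from code. The sketch below is one possible Boto3 version; the helper names are hypothetical, and the explicit public access block is an added precaution rather than a step from this post. Note that us-east-1 is the default location and must not be passed as a LocationConstraint.

```python
def create_bucket_kwargs(bucket_name, region):
    """Build the arguments for s3.create_bucket for the given Region."""
    kwargs = {"Bucket": bucket_name}
    # us-east-1 is the default location; other Regions need a LocationConstraint
    if region != "us-east-1":
        kwargs["CreateBucketConfiguration"] = {"LocationConstraint": region}
    return kwargs


def create_upload_bucket(s3_client, bucket_name, region):
    """Create the upload bucket and keep it private."""
    s3_client.create_bucket(**create_bucket_kwargs(bucket_name, region))
    s3_client.put_public_access_block(
        Bucket=bucket_name,
        PublicAccessBlockConfiguration={
            "BlockPublicAcls": True,
            "IgnorePublicAcls": True,
            "BlockPublicPolicy": True,
            "RestrictPublicBuckets": True,
        },
    )
```

Create the client in the same Region as the bucket, for example boto3.client("s3", region_name="eu-west-1"), and pass that Region to create_upload_bucket.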

Create a Lambda function

We use AWS Lambda, a serverless compute service, to run a function written in Python. This function is invoked when a new image arrives in the S3 bucket. The function will send the image to our guardrail in Amazon Bedrock for analysis. Complete the following steps to create your function:

- On the Lambda console, choose Functions in the navigation pane.

- Choose Create function.

- Enter a name and choose a recent Python runtime.

- Grant the correct permissions using the IAM execution role. The function needs permission to read the newly uploaded object from your S3 bucket (s3:GetObject) and permission to interact with Amazon Bedrock Guardrails using the bedrock:ApplyGuardrail action for your specific guardrail.

- Create your function.

Let’s explore the Python code that powers this function. We use the AWS SDK for Python (Boto3) to interact with Amazon S3 and Amazon Bedrock. The code first identifies the uploaded image from the S3 event trigger. It then checks if the image format is supported (JPEG or PNG) and verifies that the size doesn’t exceed the guardrail limit of 4 MB.

The key step involves preparing the image data for the ApplyGuardrail API call. We package the raw image bytes along with its format into a structure that Amazon Bedrock understands. We use the ApplyGuardrail API; this is efficient because we can check the image against our configured policies without needing to invoke a full foundation model.

Finally, the function calls ApplyGuardrail, passing the image content, the guardrail ID, and the version you noted earlier. It then interprets the response from Amazon Bedrock, logging whether the content was BLOCKED or NONE (meaning it passed the check), along with specific harmful categories detected if it was blocked.

The following is Python code you can use as a starting point (remember to replace the placeholders):
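What follows is a minimal sketch of such a function, written from the description above rather than copied from the authors' listing. The environment variable names GUARDRAIL_ID and GUARDRAIL_VERSION, the helper moderate_image, and the choice of source="INPUT" are assumptions. The ApplyGuardrail response reports its top-level action as GUARDRAIL_INTERVENED or NONE; this sketch logs the former as BLOCKED to match the wording used in this post.

```python
import logging
import os
import urllib.parse

logger = logging.getLogger()
logger.setLevel(logging.INFO)

# Placeholders: set these as Lambda environment variables or edit them here
GUARDRAIL_ID = os.environ.get("GUARDRAIL_ID", "<your-guardrail-id>")
GUARDRAIL_VERSION = os.environ.get("GUARDRAIL_VERSION", "DRAFT")

MAX_IMAGE_BYTES = 4 * 1024 * 1024  # 4 MB guardrail image limit
FORMATS_BY_EXTENSION = {".jpg": "jpeg", ".jpeg": "jpeg", ".png": "png"}

_clients = {}


def _client(service):
    """Create Boto3 clients on first use and reuse them across invocations."""
    if service not in _clients:
        import boto3  # included in the Lambda Python runtimes
        _clients[service] = boto3.client(service)
    return _clients[service]


def moderate_image(bucket, key, size, s3_client, bedrock_client):
    """Check one S3 object against the guardrail and return a result dict."""
    image_format = FORMATS_BY_EXTENSION.get(os.path.splitext(key)[1].lower())
    if image_format is None:
        return {"key": key, "status": "SKIPPED", "reason": "unsupported format"}
    if size > MAX_IMAGE_BYTES:
        return {"key": key, "status": "SKIPPED", "reason": "larger than 4 MB"}

    image_bytes = s3_client.get_object(Bucket=bucket, Key=key)["Body"].read()
    response = bedrock_client.apply_guardrail(
        guardrailIdentifier=GUARDRAIL_ID,
        guardrailVersion=GUARDRAIL_VERSION,
        source="INPUT",
        content=[{"image": {"format": image_format, "source": {"bytes": image_bytes}}}],
    )

    # Map the API's GUARDRAIL_INTERVENED / NONE to BLOCKED / NONE for logging
    blocked = response.get("action") == "GUARDRAIL_INTERVENED"
    categories = [
        content_filter["type"]
        for assessment in response.get("assessments", [])
        for content_filter in assessment.get("contentPolicy", {}).get("filters", [])
        if content_filter.get("action") == "BLOCKED"
    ]
    return {"key": key, "status": "BLOCKED" if blocked else "NONE", "categories": categories}


def lambda_handler(event, context):
    """Entry point: moderate every object named in the S3 event."""
    results = []
    for record in event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        # Object keys arrive URL-encoded in S3 event notifications
        key = urllib.parse.unquote_plus(record["s3"]["object"]["key"])
        size = record["s3"]["object"].get("size", 0)
        result = moderate_image(bucket, key, size, _client("s3"), _client("bedrock-runtime"))
        logger.info("Guardrail result: %s", result)
        results.append(result)
    return {"statusCode": 200, "results": results}
```

Keeping the guardrail call in moderate_image, with the clients passed in, makes it possible to exercise the logic locally with stub clients before deploying. In the Lambda console, the handler setting for this file would be lambda_function.lambda_handler if you keep the default file name.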

Create an Amazon S3 trigger for the Lambda function

With the S3 bucket ready and the function coded, you must now connect them. This is done by setting up an Amazon S3 trigger on the Lambda function:

- On the function’s configuration page, choose Add trigger.

- Choose S3 as the source.

- Point it to the S3 bucket you created earlier.

- Configure the trigger to activate on All object create events. This makes sure that whenever a new file is successfully uploaded to the S3 bucket, your Lambda function is automatically invoked.
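The console adds the trigger and the matching invoke permission for you. If you script the setup instead, both pieces have to be created explicitly. The following is a sketch under that assumption; the helper names and the StatementId value are hypothetical.

```python
def build_notification_config(function_arn):
    """Notification configuration that fires on all object create events."""
    return {
        "LambdaFunctionConfigurations": [
            {
                "LambdaFunctionArn": function_arn,
                "Events": ["s3:ObjectCreated:*"],
            }
        ]
    }


def connect_bucket_to_function(s3_client, lambda_client, bucket_name, function_arn):
    """Allow Amazon S3 to invoke the function, then register the trigger."""
    lambda_client.add_permission(
        FunctionName=function_arn,
        StatementId="AllowS3Invoke",  # hypothetical statement ID
        Action="lambda:InvokeFunction",
        Principal="s3.amazonaws.com",
        SourceArn=f"arn:aws:s3:::{bucket_name}",
    )
    # This call replaces any notification configuration already on the bucket
    s3_client.put_bucket_notification_configuration(
        Bucket=bucket_name,
        NotificationConfiguration=build_notification_config(function_arn),
    )
```

The permission is added first because Amazon S3 validates that it can invoke the destination function when the notification configuration is saved.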

Test your moderation pipeline

It’s time to see your automated workflow in action! Upload a few test images (JPEG or PNG, under 4 MB) to your designated S3 bucket. Include images that are clearly safe and others that might trigger the harmful content filters you configured in your guardrail. On the CloudWatch console, find the log group associated with your Lambda function. Examining the latest log streams will show you the function’s execution details. You should see messages confirming which file was processed, the call to ApplyGuardrail, and the final guardrail action (NONE or BLOCKED). If an image was blocked, the logs should also show the specific assessment details, indicating which harmful category was detected.
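The upload and log check can also be scripted. The sketch below assumes the function writes to the default log group, /aws/lambda/ followed by the function name, and uses a hypothetical test/ key prefix; it reads only the first page of log events, which is usually enough for a handful of test images.

```python
import os
import time


def log_group_name(function_name):
    """Default CloudWatch Logs group for a Lambda function."""
    return f"/aws/lambda/{function_name}"


def upload_test_images(s3_client, bucket_name, paths):
    """Upload local image files and return the object keys that were used."""
    keys = []
    for path in paths:
        key = "test/" + os.path.basename(path)  # hypothetical key prefix
        s3_client.upload_file(path, bucket_name, key)
        keys.append(key)
    return keys


def recent_function_logs(logs_client, function_name, since_seconds=300):
    """Return log messages the function wrote in the last few minutes."""
    start_ms = int((time.time() - since_seconds) * 1000)
    response = logs_client.filter_log_events(
        logGroupName=log_group_name(function_name),
        startTime=start_ms,
    )
    return [event["message"].rstrip() for event in response.get("events", [])]
```

Log delivery is not instantaneous, so wait a few seconds between uploading and calling recent_function_logs with boto3.client("logs").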

By following these steps, you have established a robust, serverless pipeline for automatically moderating image content using the power of Amazon Bedrock Guardrails. This proactive approach helps maintain safer online environments and aligns with responsible AI practices.

Clean up

When you’re ready to remove the moderation pipeline you built, you must clean up the resources you created to avoid unnecessary charges. Complete the following steps:

- On the Amazon S3 console, remove the event notification configuration in the bucket that triggers the Lambda function.

- Delete the bucket.

- On the Lambda console, delete the moderation function you created.

- On the IAM console, remove the execution role you created for the Lambda function.

- If you created a guardrail specifically for this project and don’t need it for other purposes, remove it using the Amazon Bedrock console.
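The console steps above can also be scripted. The following sketch covers the bucket, the function, and the guardrail; it assumes an unversioned bucket and leaves the IAM role to the console, because a role's policies must be detached or deleted before the role itself can be removed. The function name clean_up and its parameters are hypothetical.

```python
def clean_up(s3_client, lambda_client, bedrock_client,
             bucket_name, function_name, guardrail_id=None):
    """Remove the trigger, bucket, function, and (optionally) the guardrail."""
    # An empty configuration clears the bucket's event notifications
    s3_client.put_bucket_notification_configuration(
        Bucket=bucket_name, NotificationConfiguration={}
    )

    # A bucket must be empty before it can be deleted
    paginator = s3_client.get_paginator("list_objects_v2")
    for page in paginator.paginate(Bucket=bucket_name):
        objects = [{"Key": item["Key"]} for item in page.get("Contents", [])]
        if objects:
            s3_client.delete_objects(Bucket=bucket_name, Delete={"Objects": objects})
    s3_client.delete_bucket(Bucket=bucket_name)

    lambda_client.delete_function(FunctionName=function_name)

    # Skip this step if the guardrail is shared with other projects
    if guardrail_id:
        bedrock_client.delete_guardrail(guardrailIdentifier=guardrail_id)
```

These calls are destructive and cannot be undone, so double-check the bucket name, function name, and guardrail ID before running them.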

With these cleanup steps complete, you have successfully removed the components of your image moderation pipeline. You can recreate this solution in the future by following the steps outlined in this post—this highlights the ease of cloud-based, serverless architectures.

Conclusion

In the fashion industry, protecting your brand’s reputation while maintaining creative innovation is paramount. By implementing Amazon Bedrock Guardrails multimodal toxicity detection, fashion brands can automatically screen content for potentially harmful material before it impacts their reputation or violates their ethical standards. As the fashion industry continues to evolve digitally, implementing robust content moderation systems isn’t just about risk management—it’s about building trust with your customers and maintaining brand integrity. Whether you’re an established fashion house or an emerging brand, this solution offers an efficient way to uphold your content standards. The solution we outlined in this post provides a scalable, serverless architecture that accomplishes the following:

- Automatically processes new image uploads

- Uses advanced AI capabilities through Amazon Bedrock

- Provides immediate feedback on content acceptability

- Requires minimal maintenance after it’s deployed

If you’re interested in further insights on Amazon Bedrock Guardrails and its practical use, refer to the video Amazon Bedrock Guardrails: Make Your AI Safe and Ethical, and the post Amazon Bedrock Guardrails image content filters provide industry-leading safeguards, helping customer block up to 88% of harmful multimodal content: Generally available today.

About the Authors

Jordan Jones is a Solutions Architect at AWS within the Cloud Sales Center organization. He uses cloud technologies to solve complex problems, bringing defense industry experience and expertise in various operating systems, cybersecurity, and cloud architecture. He enjoys mentoring aspiring professionals and speaking on various career panels. Outside of work, he volunteers within the community and can be found watching Golden State Warriors games, solving Sudoku puzzles, or exploring new cultures through world travel.


Jean Jacques Mikem is a Solutions Architect at AWS with a passion for designing secure and scalable technology solutions. He uses his expertise in cybersecurity and technological hardware to architect robust systems that meet complex business needs. With a strong foundation in security principles and computing infrastructure, he excels at creating solutions that bridge business requirements with technical implementation.

