How London Stock Exchange Group is detecting market abuse with their AI-powered Surveillance Guide on Amazon Bedrock

London Stock Exchange Group (LSEG) is a global provider of financial markets data and infrastructure. It operates the London Stock Exchange and manages international equity, fixed income, and derivative markets. The group also develops capital markets software, offers real-time and reference data products, and provides extensive post-trade services. This post was co-authored with Charles Kellaway and Rasika Withanawasam of LSEG.

Financial markets are remarkably complex, hosting increasingly dynamic investment strategies across new asset classes and interconnected venues. Accordingly, regulators place great emphasis on the ability of market surveillance teams to keep pace with evolving risk profiles. However, the landscape is vast; London Stock Exchange alone facilitates the trading and reporting of over £1 trillion of securities by 400 members annually. Effective monitoring must cover all MiFID asset classes, markets, and jurisdictions to detect market abuse, while also giving weight to participant relationships, and market surveillance systems must scale with volumes and volatility. Many existing systems struggle to meet these demands: they are outdated, fall short of regulatory expectations, and leave analysts with manual, time-consuming work.

To address these challenges, London Stock Exchange Group (LSEG) has developed an innovative solution using Amazon Bedrock, a fully managed service that offers a choice of high-performing foundation models from leading AI companies, to automate and enhance their market surveillance capabilities. LSEG’s AI-powered Surveillance Guide helps analysts efficiently review trades flagged for potential market abuse by automatically analyzing news sensitivity and its impact on market behavior.

In this post, we explore how LSEG used Amazon Bedrock and Anthropic’s Claude foundation models to build an automated system that significantly improves the efficiency and accuracy of market surveillance operations.

The challenge

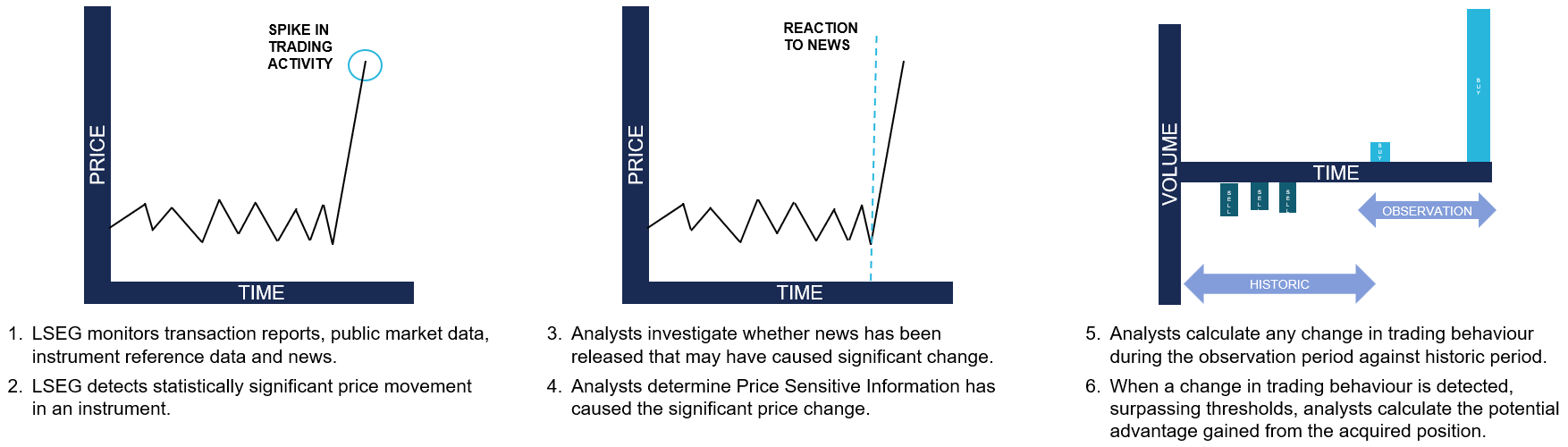

Currently, LSEG’s surveillance monitoring systems generate automated, customized alerts to flag suspicious trading activity to the Market Supervision team. Analysts then conduct initial triage assessments to determine whether the activity warrants further investigation, which can require differing levels of qualitative analysis. This can involve manually collating any evidence that might be relevant when methodically corroborating regulation, news, sentiment, and trading activity. For example, during an insider dealing investigation, analysts are alerted to statistically significant price movements. The analyst must then conduct an initial assessment of related news during the observation period to determine whether the highlighted price move was caused by specific news, and how price sensitive that news is likely to be, as shown in the following figure. This initial step in assessing the presence, or absence, of price-sensitive news guides the subsequent actions an analyst will take with a possible case of market abuse.

Initial triaging can be a time-consuming and resource-intensive process, and it can still necessitate a full investigation if the identified behavior remains potentially suspicious or abusive.

Moreover, the dynamic nature of financial markets and the evolving tactics and sophistication of bad actors demand that market facilitators revisit automated rules-based surveillance systems. The increasing frequency of alerts and the high number of false positives reduce analysts’ ability to devote quality time to the most meaningful cases, and this heightened demand on resources could result in operational delays.

Solution overview

To address these challenges, LSEG collaborated with AWS to improve insider dealing detection, developing a generative AI prototype that automatically predicts the probability of news articles being price sensitive. The system employs Anthropic’s Claude Sonnet 3.5 model (the most price-performant model at the time) through Amazon Bedrock to analyze news content from LSEG’s Regulatory News Service (RNS) and classify articles based on their potential market impact. The results help analysts determine more quickly whether highlighted trading activity can be mitigated during the observation period.

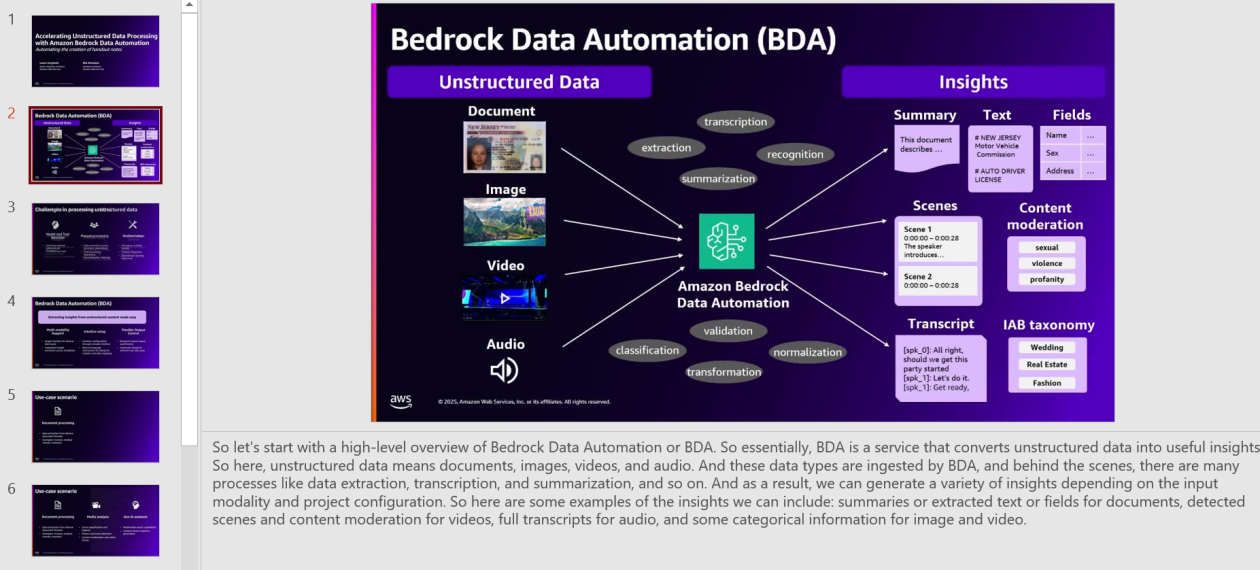

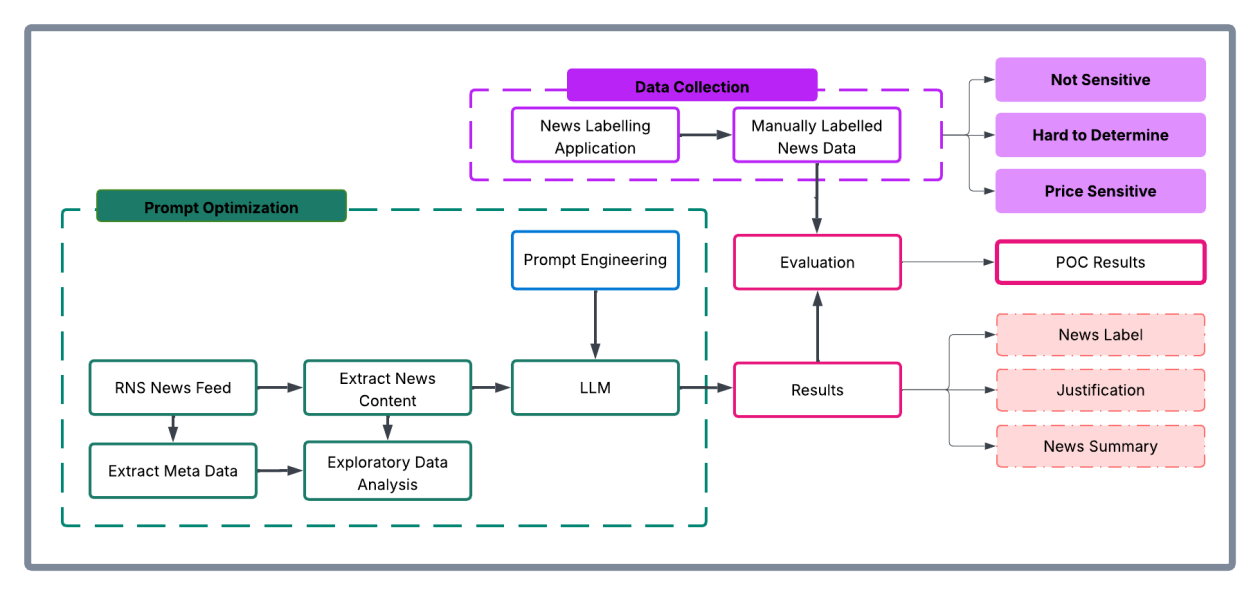

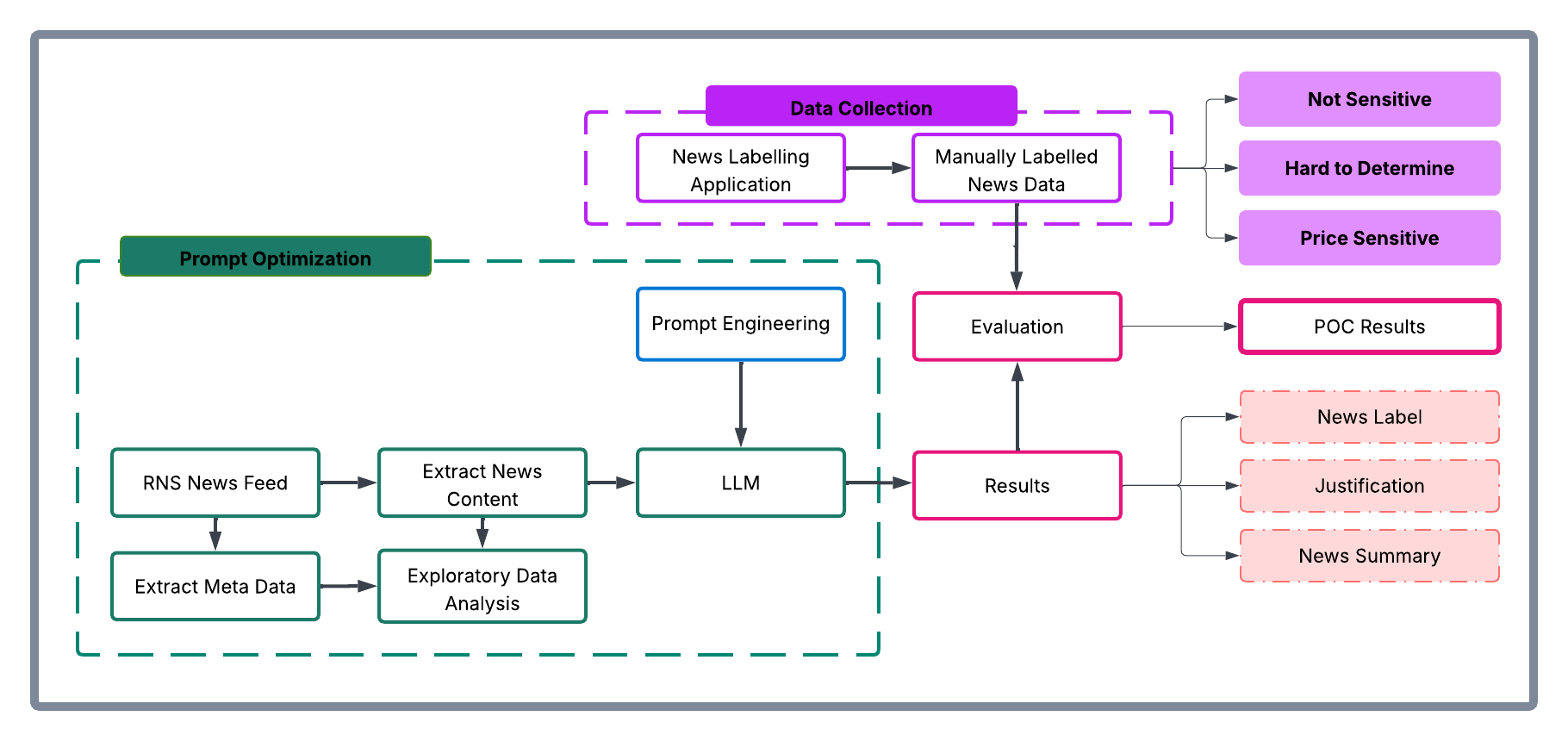

The architecture consists of three main components:

- A data ingestion and preprocessing pipeline for RNS articles

- Amazon Bedrock integration for news analysis using Claude Sonnet 3.5

- Inference application for visualising results and predictions

The following diagram illustrates the conceptual approach:

The workflow processes news articles through the following steps:

- Ingest raw RNS news documents in HTML format

- Preprocess and extract clean news text

- Fill the classification prompt template with text from the news documents

- Prompt Anthropic’s Claude Sonnet 3.5 through Amazon Bedrock

- Receive and process model predictions and justifications

- Present results through the visualization interface developed using Streamlit
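The first three workflow steps can be sketched with the Python standard library alone. This is a minimal illustration, not LSEG's pipeline: the parser class, the function names, and the wording of `PROMPT_TEMPLATE` are all hypothetical, and the actual prompt used in the project is not reproduced here.

```python
from html.parser import HTMLParser
from string import Template


class _TextExtractor(HTMLParser):
    """Collects visible text and skips the contents of <script> and <style>."""

    SKIPPED_TAGS = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self._chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text that is outside skipped elements.
        if self._skip_depth == 0 and data.strip():
            self._chunks.append(data.strip())

    def text(self):
        return " ".join(self._chunks)


def extract_clean_text(raw_html: str) -> str:
    """Step 2: strip markup from a raw HTML news document."""
    parser = _TextExtractor()
    parser.feed(raw_html)
    parser.close()
    return parser.text()


# Hypothetical template wording, for illustration only.
PROMPT_TEMPLATE = Template(
    "You are assisting a market surveillance analyst.\n"
    "Summarise the news article below, classify its price sensitivity, "
    "and explain your reasoning.\n\n"
    "<article>\n$article_text\n</article>"
)


def build_prompt(raw_html: str) -> str:
    """Step 3: fill the classification prompt template with the clean text."""
    return PROMPT_TEMPLATE.substitute(article_text=extract_clean_text(raw_html))
```

Calling `build_prompt(raw_html)` on one RNS document would yield the string that is then sent to the model in the next step.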

Methodology

The team collated a comprehensive dataset of approximately 250,000 RNS articles spanning 6 consecutive months of trading activity in 2023. The raw data (HTML documents from RNS) was first preprocessed within the AWS environment by removing extraneous HTML elements and formatting the documents to extract clean textual content. Having isolated the substantive news content, the team then carried out exploratory data analysis to understand distribution patterns within the RNS corpus, focusing on three dimensions:

- News categories: Distribution of articles across different regulatory categories

- Instruments: Financial instruments referenced in the news articles

- Article length: Statistical distribution of document sizes
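As a rough illustration of this kind of exploratory analysis, the sketch below counts categories and instruments and summarises article length in words. The record fields (`category`, `instruments`, `text`) are assumptions made for the example; the schema of the RNS corpus is not described in this post.

```python
from collections import Counter
from statistics import mean, median


def summarise_corpus(articles):
    """Summarise a list of article records along the three EDA dimensions.

    Each record is assumed (hypothetically) to be a dict with the keys
    'category' (str), 'instruments' (list of str), and 'text' (str).
    """
    category_counts = Counter(a["category"] for a in articles)
    instrument_counts = Counter(
        instrument for a in articles for instrument in a["instruments"]
    )
    # Article length measured in whitespace-separated words.
    lengths = [len(a["text"].split()) for a in articles]
    return {
        "category_counts": category_counts,
        "instrument_counts": instrument_counts,
        "length_words": {
            "min": min(lengths),
            "median": median(lengths),
            "mean": mean(lengths),
            "max": max(lengths),
        },
    }
```

Category counts of this kind are what a stratified sample, such as the evaluation subset described next, would typically be drawn against.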

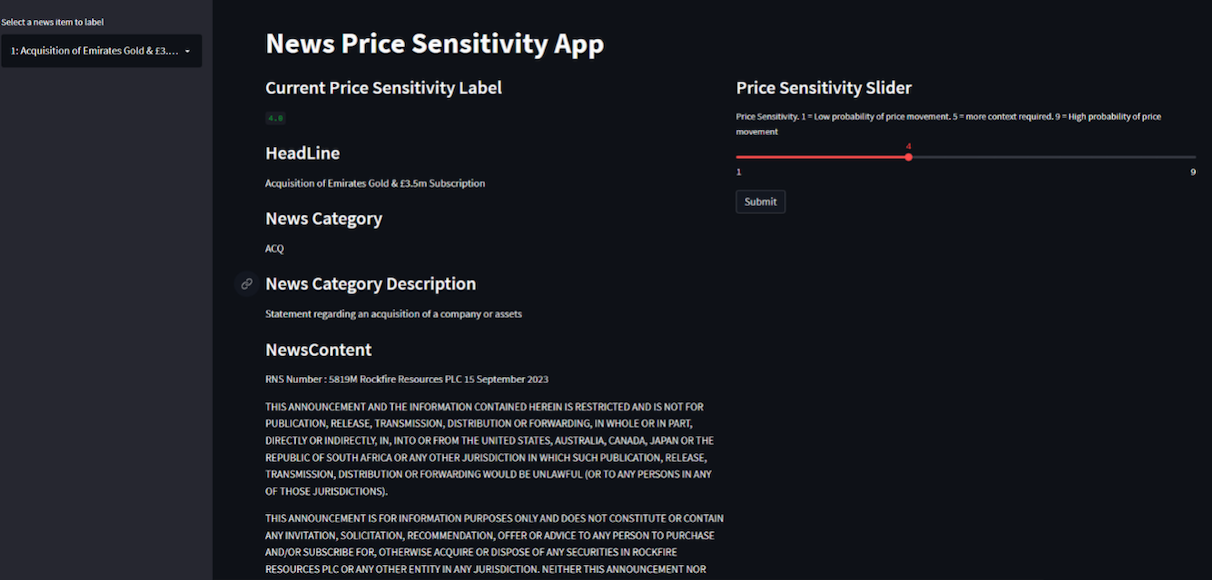

Exploration provided contextual understanding of the news landscape and informed the sampling strategy in creating a representative evaluation dataset. 110 articles were selected to cover major news categories, and this curated subset was presented to market surveillance analysts who, as domain experts, evaluated each article’s price sensitivity on a nine-point scale, as shown in the following image:

- 1–3: PRICE_NOT_SENSITIVE – Low probability of price sensitivity

- 4–6: HARD_TO_DETERMINE – Uncertain price sensitivity

- 7–9: PRICE_SENSITIVE – High probability of price sensitivity
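The banding above maps directly to a small helper. The function name is hypothetical; the thresholds and labels are the ones listed in the scale.

```python
def label_from_score(score: int) -> str:
    """Map an analyst's nine-point price-sensitivity score to its band."""
    if not 1 <= score <= 9:
        raise ValueError(f"score must be between 1 and 9, got {score}")
    if score <= 3:
        return "PRICE_NOT_SENSITIVE"
    if score <= 6:
        return "HARD_TO_DETERMINE"
    return "PRICE_SENSITIVE"
```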

The experiment was executed within Amazon SageMaker using Jupyter Notebooks as the development environment. The technical stack consisted of:

- Instructor library: Provided integration capabilities with Anthropic’s Claude Sonnet 3.5 model in Amazon Bedrock

- Amazon Bedrock: Served as the API infrastructure for model access

- Custom data processing pipelines (Python): For data ingestion and preprocessing

This infrastructure enabled systematic experimentation with various algorithmic approaches, including traditional supervised learning methods, prompt engineering with foundation models, and fine-tuning scenarios.
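For readers who want to see what a model call through Amazon Bedrock can look like, here is a minimal sketch. It does not use the Instructor library that the team used; instead it calls the Bedrock Runtime Converse API directly through a client object. The model identifier, token limit, and temperature are assumptions chosen for illustration, not the project's settings.

```python
# Assumed model identifier for Claude 3.5 Sonnet on Amazon Bedrock; check the
# identifiers available in your own account and Region before using it.
ASSUMED_MODEL_ID = "anthropic.claude-3-5-sonnet-20240620-v1:0"


def classify_article(client, prompt: str, model_id: str = ASSUMED_MODEL_ID) -> str:
    """Send one prompt through the Converse API and return the reply text.

    `client` is expected to be a Bedrock Runtime client, for example
    boto3.client("bedrock-runtime"), or any object with a compatible
    `converse` method (useful for testing without AWS credentials).
    """
    response = client.converse(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
        # Illustrative settings: a low temperature keeps classifications
        # as repeatable as possible across runs.
        inferenceConfig={"maxTokens": 1024, "temperature": 0.0},
    )
    return response["output"]["message"]["content"][0]["text"]
```

Passing the client in as an argument keeps the function easy to exercise with a stub, which matters when prompts are being iterated on frequently.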

The evaluation framework established specific technical success metrics:

- Data pipeline implementation: Successful ingestion and preprocessing of RNS data

- Metric definition: Clear articulation of precision, recall, and F1 metrics

- Workflow completion: Execution of comprehensive exploratory data analysis (EDA) and experimental workflows

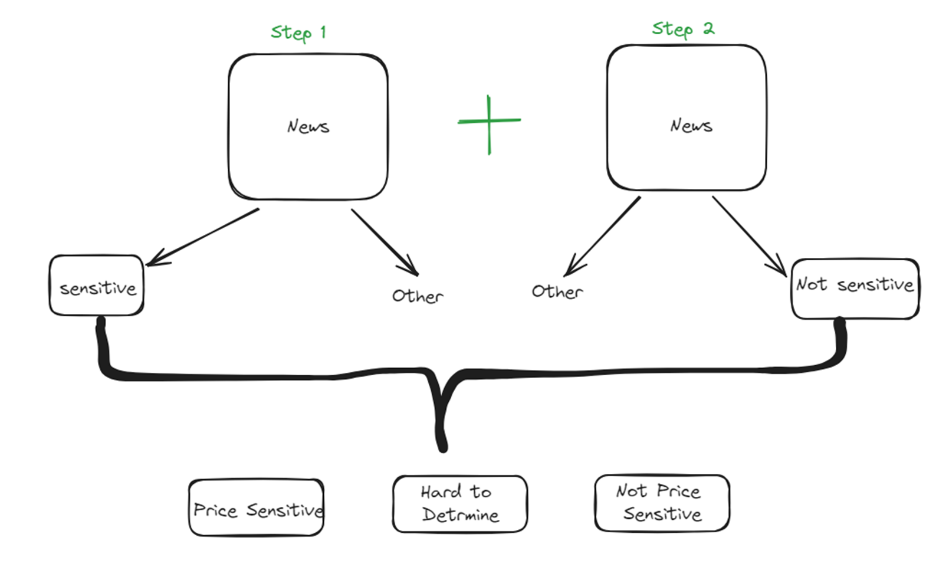

The analytical approach was a two-step classification process, as shown in the following figure:

- Step 1: Classify news articles as potentially price sensitive or other

- Step 2: Classify news articles as potentially not price sensitive or other

This multi-stage architecture was designed to maximize classification accuracy by allowing analysts to focus on specific aspects of price sensitivity at each stage. The results from each step were then merged to produce the final output, which was compared with the human-labeled dataset to generate quantitative results.

To consolidate the results from both classification steps, the data merging rules followed were:

| Step 1 Classification | Step 2 Classification | Final Classification |
|---|---|---|
| Sensitive | Other | Sensitive |
| Other | Non-sensitive | Non-sensitive |
| Other | Other | Ambiguous – requires manual review (Hard to Determine) |
| Sensitive | Non-sensitive | Ambiguous – requires manual review (Hard to Determine) |
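The merging rules in the table can be expressed as a small function. The function name and the exact label strings are illustrative; the logic follows the four rows above, with both ambiguous cases collapsing to Hard to Determine.

```python
def merge_classifications(step1: str, step2: str) -> str:
    """Combine the two binary classification steps into a final label.

    step1 is "Sensitive" or "Other"; step2 is "Non-sensitive" or "Other".
    """
    if step1 not in {"Sensitive", "Other"}:
        raise ValueError(f"unexpected step 1 label: {step1!r}")
    if step2 not in {"Non-sensitive", "Other"}:
        raise ValueError(f"unexpected step 2 label: {step2!r}")

    flagged_sensitive = step1 == "Sensitive"
    flagged_non_sensitive = step2 == "Non-sensitive"

    if flagged_sensitive and not flagged_non_sensitive:
        return "Sensitive"
    if flagged_non_sensitive and not flagged_sensitive:
        return "Non-sensitive"
    # Either both steps abstained or they contradict each other.
    return "Hard to Determine"
```

A final label is only confident when exactly one step makes a positive claim; agreement on "Other" and outright contradiction both route the article to manual review.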

Based on the insights gathered, prompts were optimized. The prompt templates elicited three key components from the model:

- A concise summary of the news article

- A price sensitivity classification

- A chain-of-thought explanation justifying the classification decision
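One way to handle these three components downstream is to ask the model for JSON and parse it into a typed record. The sketch below assumes a JSON reply with the keys `summary`, `classification`, and `justification`; that format and the class name are assumptions for illustration, not the format used in the project.

```python
import json
from dataclasses import dataclass

REQUIRED_KEYS = ("summary", "classification", "justification")


@dataclass(frozen=True)
class ArticleAssessment:
    summary: str         # concise summary of the news article
    classification: str  # price sensitivity label returned by the model
    justification: str   # reasoning behind the classification


def parse_assessment(model_output: str) -> ArticleAssessment:
    """Parse a JSON reply (assumed format) into an ArticleAssessment."""
    payload = json.loads(model_output)
    if not isinstance(payload, dict):
        raise ValueError("model reply is not a JSON object")
    missing = [key for key in REQUIRED_KEYS if key not in payload]
    if missing:
        raise ValueError(f"model reply is missing fields: {missing}")
    return ArticleAssessment(**{key: str(payload[key]) for key in REQUIRED_KEYS})
```

Rejecting incomplete replies early means a missing justification surfaces as an error rather than as an empty field in the analyst's view.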

The following is an example prompt:

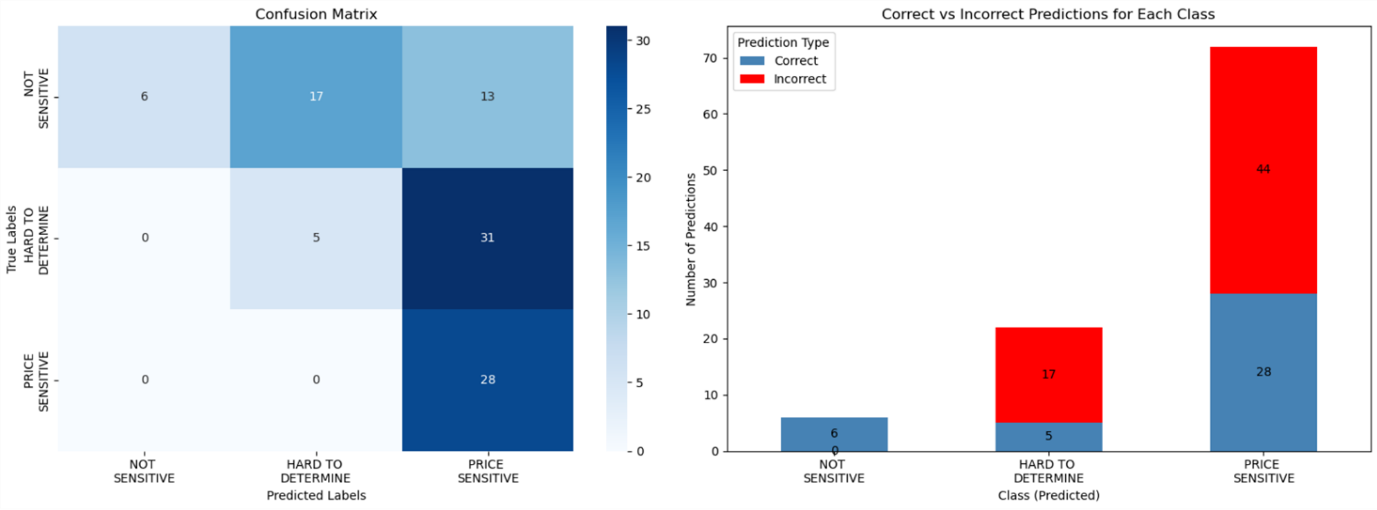

As shown in the following figure, the solution was optimized to maximize:

- Precision for the NOT SENSITIVE class

- Recall for the PRICE SENSITIVE class

This optimization strategy was deliberate, facilitating high confidence in non-sensitive classifications to reduce unnecessary escalations to human analysts (in other words, to reduce false positives). Through this methodical approach, prompts were iteratively refined while maintaining rigorous evaluation standards through comparison against the expert-annotated baseline data.
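For reference, the two target metrics use the standard per-class definitions: precision is the share of articles predicted as a class that truly belong to it, and recall is the share of articles truly in a class that were predicted as such. The sketch below implements both. The labels in the usage example are toy data invented for illustration, not results from the evaluation.

```python
def precision(y_true, y_pred, label):
    """Of the items predicted as `label`, the fraction that truly are `label`."""
    predicted = [t for t, p in zip(y_true, y_pred) if p == label]
    if not predicted:
        return None  # undefined when nothing was predicted as `label`
    return sum(t == label for t in predicted) / len(predicted)


def recall(y_true, y_pred, label):
    """Of the items that truly are `label`, the fraction predicted as `label`."""
    actual = [p for t, p in zip(y_true, y_pred) if t == label]
    if not actual:
        return None  # undefined when no item truly has `label`
    return sum(p == label for p in actual) / len(actual)


# Toy labels (hypothetical): analyst judgements versus model output.
analyst = ["PRICE_NOT_SENSITIVE", "PRICE_NOT_SENSITIVE", "HARD_TO_DETERMINE", "PRICE_SENSITIVE"]
model = ["PRICE_NOT_SENSITIVE", "HARD_TO_DETERMINE", "HARD_TO_DETERMINE", "PRICE_SENSITIVE"]

not_sensitive_precision = precision(analyst, model, "PRICE_NOT_SENSITIVE")
price_sensitive_recall = recall(analyst, model, "PRICE_SENSITIVE")
```

In this toy example the model is cautious: it escalates one non-sensitive article as hard to determine, which costs recall on the non-sensitive class but leaves its precision untouched. That is the trade-off the optimization strategy accepts.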

Key benefits and results

Over a 6-week period, Surveillance Guide demonstrated remarkable accuracy when evaluated on a representative sample dataset. Key achievements include the following:

- 100% precision in identifying non-sensitive news: all 6 articles allocated to this category were confirmed by analysts as not price sensitive

- 100% recall in detecting price-sensitive content: all 36 hard-to-determine and 28 price-sensitive articles, as labelled by analysts, were allocated to one of these two categories, so price-sensitive content was never misclassified as non-sensitive

- Automated analysis of complex financial news

- Detailed justifications for classification decisions

- Effective triaging of results by sensitivity level

In this implementation, LSEG has employed Amazon Bedrock so that they can use secure, scalable access to foundation models through a unified API, minimizing the need for direct model management and reducing operational complexity. Because of the serverless architecture of Amazon Bedrock, LSEG can take advantage of dynamic scaling of model inference capacity based on news volume, while maintaining consistent performance during market-critical periods. Its built-in monitoring and governance features support reliable model performance and maintain audit trails for regulatory compliance.

Impact on market surveillance

This AI-powered solution transforms market surveillance operations by:

- Reducing manual review time for analysts

- Improving consistency in price-sensitivity assessment

- Providing detailed audit trails through automated justifications

- Enabling faster response to potential market abuse cases

- Scaling surveillance capabilities without proportional resource increases

The system’s ability to process news articles instantly and provide detailed justifications helps analysts focus their attention on the most critical cases while maintaining comprehensive market oversight.

Proposed next steps

LSEG plans to first enhance the solution, for internal use, by:

- Integrating additional data sources, including company financials and market data

- Implementing few-shot prompting and fine-tuning capabilities

- Expanding the evaluation dataset for continued accuracy improvements

- Deploying in live environments alongside manual processes for validation

- Adapting to additional market abuse typologies

Conclusion

LSEG’s Surveillance Guide demonstrates how generative AI can transform market surveillance operations. Powered by Amazon Bedrock, the solution improves efficiency and enhances the quality and consistency of market abuse detection.

As financial markets continue to evolve, AI-powered solutions architected along similar lines will become increasingly important for maintaining integrity and compliance. AWS and LSEG are intent on being at the forefront of this change.

The selection of Amazon Bedrock as the foundation model service provides LSEG with the flexibility to iterate on their solution while maintaining enterprise-grade security and scalability. To learn more about building similar solutions with Amazon Bedrock, visit the Amazon Bedrock documentation or explore other financial services use cases in the AWS Financial Services Blog.

About the authors

Charles Kellaway is a Senior Manager in the Equities Trading team at LSE plc, based in London. With a background spanning both Equity and Insurance markets, Charles specialises in deep market research and business strategy, with a focus on deploying technology to unlock liquidity and drive operational efficiency. His work bridges the gap between finance and engineering, and he always brings a cross-functional perspective to solving complex challenges.

Rasika Withanawasam is a seasoned technology leader with over two decades of experience architecting and developing mission-critical, scalable, low-latency software solutions. Rasika’s core expertise lies in big data and machine learning applications, focusing intently on FinTech and RegTech sectors. He has held several pivotal roles at LSEG, including Chief Product Architect for the flagship Millennium Surveillance and Millennium Analytics platforms, and currently serves as Manager of the Quantitative Surveillance & Technology team, where he leads AI/ML solution development.

Richard Chester is a Principal Solutions Architect at AWS, advising large Financial Services organisations. He has 25+ years’ experience across the Financial Services Industry where he has held leadership roles in transformation programs, DevOps engineering, and Development Tooling. Since moving across to AWS from being a customer, Richard is now focused on driving the execution of strategic initiatives, mitigating risks and tackling complex technical challenges for AWS customers.
