Introducing V-RAG: revolutionizing AI-powered video production with Retrieval Augmented Generation

This post introduces Video Retrieval-Augmented Generation (V-RAG), an approach to help improve video content creation. By combining retrieval augmented generation with advanced video AI models, V-RAG offers an efficient, and reliable solution for generating AI videos.

A key development in generative AI is AI-powered video generation. Before AI, creating dynamic video content required extensive resources, technical expertise, and significant manual effort. Today, AI models can generate videos from simple inputs, but organizations still face challenges like unpredictable results. This post introduces Video Retrieval-Augmented Generation (V-RAG), an approach to help improve video content creation. By combining retrieval augmented generation with advanced video AI models, V-RAG offers an efficient, and reliable solution for generating AI videos.

Video generation

AI video generation represents a transformative frontier in digital content creation, enabling the automated production of dynamic visual narratives without traditional filming or animation processes. By using deep learning architectures, these systems can synthesize realistic or stylized video sequences. Unlike conventional video production that requires cameras, actors, and extensive post-production, AI generation creates content entirely through computational processes analyzing patterns from massive training datasets to render coherent visual stories. Individuals and organizations can use this technology to produce visual content with minimal technical expertise, reducing the time, resources, and specialized skills traditionally required. As these models continue to evolve, they promise to fundamentally reshape how visual stories are conceived, produced, and shared across industries ranging from entertainment and marketing to education and communication.

Text-to-video generation

Text-to-video generation creates dynamic video content from narrative or thematic text prompts. This technology interprets textual descriptions and transforms them into coherent visual sequences that follow the specified narrative. While text prompts effectively guide the overall theme and storyline, they can sometimes fall short in capturing highly specific visual details with precision. Text-to-video serves as the foundation of AI video creation, where users can generate content based on descriptive language alone.

Video generation customization

Text prompting can only get you so far with video generation. There’s inherently limited control when relying solely on text descriptions, as models can ignore crucial parts of your prompt or interpret them differently than you intended. Certain visual concepts prove difficult to explain in words alone, additionally, you’re constrained by the model’s token limit that caps how detailed your instructions can be. This is where further customization becomes invaluable. Users can use robust customization tools to specify numerous parameters beyond what text can efficiently communicate, such as style, mood, and intricate visual aesthetics. These controls help overcome the limitations of text prompting by providing direct mechanisms to influence the output. Without such capabilities, creators are left hoping the model correctly interprets their intentions rather than actively directing the creative process. Customization bridges the gap between vague generation and precise visual control, making AI video tools truly useful for professional applications.

Model fine-tuning

Fine-tuning adapts pre-trained video generation models to specific domains, styles, or use cases. This process allows organizations to create specialized video generators that excel at tasks whether they’re producing product demonstrations with consistent branding, generating medical educational content, or creating videos in a distinctive artistic style. Fine-tuning typically involves further training of existing models on carefully curated datasets representing the target domain, allowing the model to learn the unique visual patterns, movements, and stylistic elements required for specialized applications. However, fine-tuning video generation models presents significant challenges. The fundamental obstacle begins with data acquisition because high-quality video data that’s suitable for training is both expensive and difficult to obtain. Organizations need diverse, well-labeled footage in a specific format covering specific use cases while meeting technical quality standards. The computational demands are substantial, representing a major barrier to entry. A single fine-tuning run can require multiple high-end GPUs operating continuously, and retraining to incorporate new capabilities multiplies these costs with each iteration. Even with perfect data and unlimited computational resources, success remains uncertain due to the interconnected nature of video elements like coherence, physical accuracy, lighting consistency, and object persistence. Improvements in one area often led to unexpected degradation in others, creating complex optimization challenges resistant to simple solutions.

Image-to-video

Image-to-video generation complements text-based approaches by offering additional visual control. By using an input image as a reference, users can ensure specific details such as the color, style, and other attributes of objects are accurately represented in the generated video. For example, if a user wants to feature a red purse in their video, providing an image of that exact purse guarantees visual fidelity that text descriptions alone might not achieve. This technique maintains consistency and improves prompt adherence through conditioning, while enabling dynamic movement and integration within the broader narrative context. Image-to-video generation doesn’t require any fine-tuning.

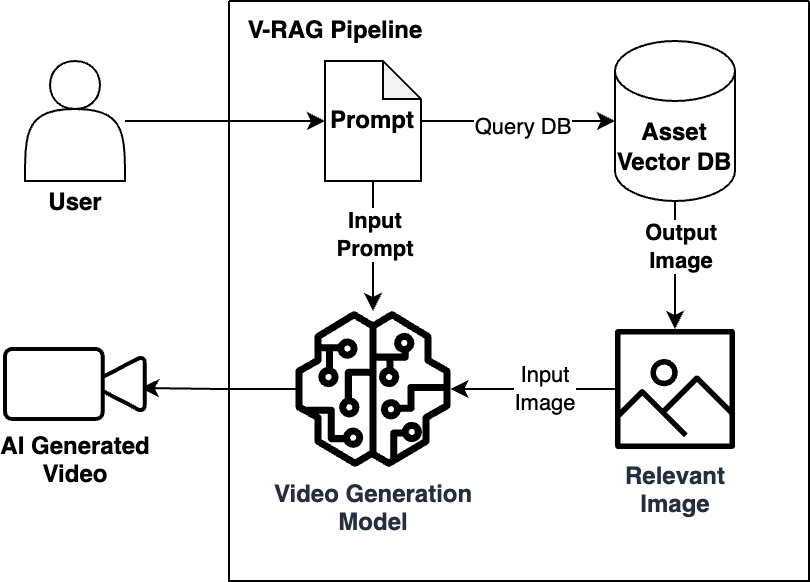

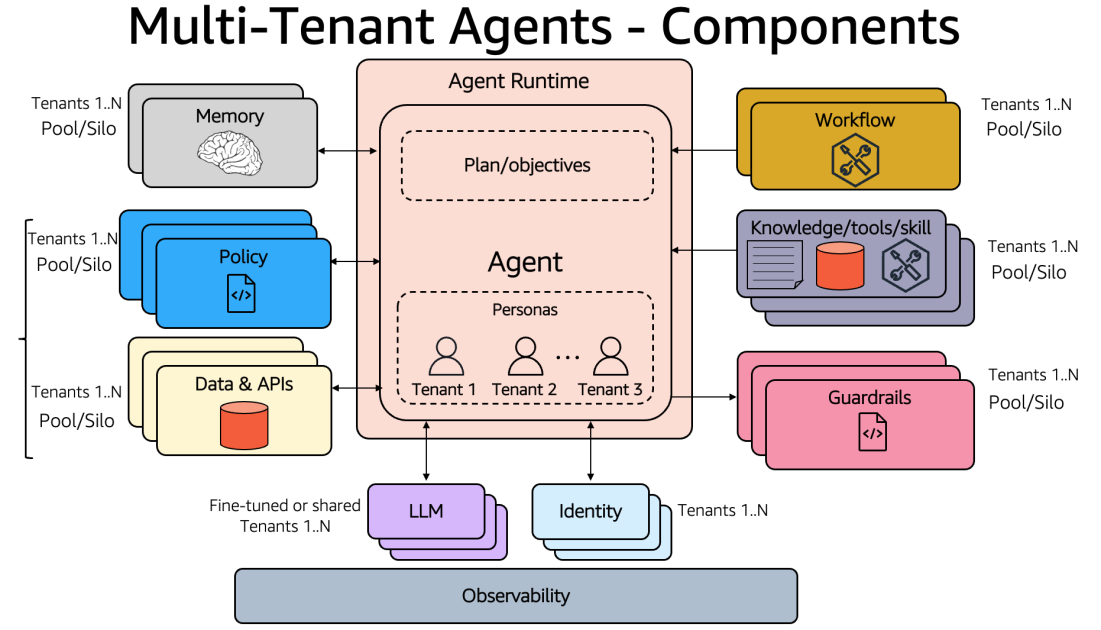

V-RAG: an effective approach in video generation customization

Video Retrieval-Augmented Generation (V-RAG) builds upon image-to-video technology to expand video customization capabilities. While traditional image-to-video converts a single reference image into motion, V-RAG expands this capability by retrieving and incorporating a relevant image from a database to feed into a video generation. This approach offers several capabilities without requiring any model training or retraining. Organizations can ingest their image collections into a vector database, query it, and feed its output to an existing video generation model and start producing tailored content immediately.

V-RAG’s efficiency comes from requiring only static images, which are generally more readily available than video training data. These images can be added to the vector database on the fly, making them instantly available for the next generation task without computational delays. Every video generated through this process maintains clear traceability to its source images, creating an auditable trail that enhances verification and debugging capabilities. The system grounds video outputs in specific reference imagery, which is designed to help reduce hallucination risks and manage computational costs. Organizations can maintain separate visual knowledge bases for different departments or use cases, streamlining compliance as all source materials can be thoroughly vetted before entering the system.

Logical Diagram of V-RAG

The evolving nature of V-RAG

V-RAG represents not a fixed technology, but an evolving framework that will continuously expand as AI capabilities advance. While current implementations primarily utilize image databases, the fundamental retrieval augmentation approach is modality-agnostic. As multimodal AI models mature, V-RAG systems will naturally incorporate audio samples, video snippets, and 3D models as reference points during generation. Future iterations will likely support synthesizing complete audio-visual experiences, generating videos with perfectly synchronized speech, realistic environmental sounds, and custom musical scores based on retrieved audio patterns. This flexibility positions V-RAG as a foundational paradigm rather than a specific implementation, allowing it to adapt alongside broader AI advancements while maintaining its core benefits of traceability, efficiency, and reduced hallucination. The ultimate vision extends beyond even audiovisual content to potentially incorporating interactive elements, creating a comprehensive multimodal generation system that can produce engaging outputs while maintaining grounding in reliable reference material.

Key benefits of V-RAG

Generating videos using images retrieved through V-RAG offers significant benefits like increased accuracy, relevance, and contextual understanding. This approach grounds generated content in a specific knowledge base to help guide video creation. This reduces hallucination and ensures that the video aligns with information from the image source, making it particularly useful for educational, documentary, or explainer video formats. Key benefits of using V-RAG from images include:

- Factual accuracy – Ensuring the generated video content is grounded in real information, reducing the likelihood of inaccurate or misleading visuals.

- Contextual relevance – Retrieving images that are highly relevant to the given topic or query, leading to a more cohesive and focused video narrative.

- Dynamic content generation – Allowing for flexible video creation by dynamically selecting and assembling images based on user input or changing requirements.

- Reduced development time – Using a pre-existing knowledge base to cut down on the time needed to gather and curate visual assets for video creation.

- Personalized content – Tailoring videos to individual user needs, generating content designed to be relevant and engaging.

- Scalability – Designed to scale by ingesting additional images into the vector database.

Real-world applications of V-RAG

Real-world applications of V-RAG are vast and varied. In education, V-RAG can automatically create instructional videos by pulling relevant images from a subject matter knowledge base. For personalized content, V-RAG can tailor video content to individual users by retrieving images based on their specific interests. For marketing, V-RAG can create targeted video ads by pulling images that align with specific demographics or product features.

Conclusion

As AI technology continues to evolve, V-RAG’s flexible framework positions it to incorporate new modalities and capabilities, from advanced audio integration to interactive elements. The AWS implementation demonstrates how organizations can already begin using this technology through existing cloud services, making AI video generation accessible to a broader range of users. Looking ahead, V-RAG’s impact on video content creation will likely extend far beyond its current applications in education, and marketing. As the technology matures, it has the potential to make video production accessible while supporting quality, accuracy, and customization. This approach offers a promising path for AI-powered video generation, enabling organizations to create compelling visual content.

References

Acknowledgement

Special thanks to Vishwa Gupta, Shuai Cao and Seif for their contribution.

![KAIZEN Locations & Map Guide – Boss, Chest, Weapon, Style [KASHIMO]](https://www.destructoid.com/wp-content/uploads/2026/02/kaizen-locations-and-map-guide.jpg)