Spring AI SDK for Amazon Bedrock AgentCore is now Generally Available

With the new Spring AI AgentCore SDK, you can build production-ready AI agents and run them on the highly scalable AgentCore Runtime. The Spring AI AgentCore SDK is an open source library that brings Amazon Bedrock AgentCore capabilities into Spring AI. In this post, we build an AI agent starting with a chat endpoint, then adding streaming responses, conversation memory, and tools for web browsing and code execution.

Agentic AI is transforming how organizations use generative AI, moving beyond prompt-response interactions to autonomous systems that can plan, execute, and complete complex multi-step tasks. While early proofs of concept in the Agentic AI space excite business stakeholders, scaling them to production requires addressing scalability, governance, and security challenges. Amazon Bedrock AgentCore is an Agentic AI platform to build, deploy, and operate agents at scale using any framework and any model.

Java developers want to build AI agents using familiar Spring patterns, but production deployment requires infrastructure that’s complex to implement from scratch. Amazon Bedrock AgentCore provides building blocks like managed runtime infrastructure (scalability, reliability, security, observability), short- and long-term memory, browser automation, sandboxed code execution, and evaluations. Integrating these capabilities into a Spring application currently requires writing custom controllers to fulfill the AgentCore Runtime contract, handling Server-Sent Events (SSE) streaming, implementing health checks, managing rate limiting, and wiring up Spring advisors, memory repositories, and tool definitions. That adds up to weeks of infrastructure work before you write any agent logic.

With the new Spring AI AgentCore SDK, you can build production-ready AI agents and run them on the highly scalable AgentCore Runtime. The Spring AI AgentCore SDK is an open source library that brings Amazon Bedrock AgentCore capabilities into Spring AI through familiar patterns: annotations, auto-configuration, and composable advisors. Spring AI developers add a dependency, annotate a method, and the SDK handles the rest.

Understanding the AgentCore Runtime contract

AgentCore Runtime manages agent lifecycle and scaling with pay-per-use pricing, meaning you don’t pay for idle compute. The runtime routes incoming requests to your agent and monitors its health, but this requires your agent to follow a contract. The contract requires that the implementation exposes two endpoints. The /invocations endpoint receives requests and returns responses as either JSON or SSE streaming. The /ping health endpoint reports a Healthy or HealthyBusy status. Long-running tasks must signal that they’re busy, or the runtime might scale them down to save costs. The SDK implements this contract automatically, including async task detection that reports busy status when your agent is processing.
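To make the contract concrete, here is a minimal sketch of the two endpoints using only the JDK's built-in HTTP server. It is not the SDK's implementation. The class names (BusyTracker, ContractSketch), the JSON body returned by /ping, and the placeholder response from /invocations are assumptions made for illustration; only the endpoint paths, port 8080, and the Healthy and HealthyBusy values come from the description above.

```java
import com.sun.net.httpserver.HttpExchange;
import com.sun.net.httpserver.HttpServer;
import java.io.IOException;
import java.io.OutputStream;
import java.net.InetSocketAddress;
import java.nio.charset.StandardCharsets;
import java.util.concurrent.Executors;
import java.util.concurrent.atomic.AtomicInteger;

/** Hypothetical helper: counts in-flight work so /ping can report busy status. */
class BusyTracker {
    private final AtomicInteger inFlight = new AtomicInteger();

    void taskStarted()  { inFlight.incrementAndGet(); }
    void taskFinished() { inFlight.decrementAndGet(); }

    String status() {
        return inFlight.get() > 0 ? "HealthyBusy" : "Healthy";
    }
}

class ContractSketch {
    static void respond(HttpExchange exchange, int code, String json) throws IOException {
        byte[] bytes = json.getBytes(StandardCharsets.UTF_8);
        exchange.getResponseHeaders().set("Content-Type", "application/json");
        exchange.sendResponseHeaders(code, bytes.length);
        // Closing the response body completes the exchange.
        try (OutputStream out = exchange.getResponseBody()) {
            out.write(bytes);
        }
    }

    static HttpServer start(int port, BusyTracker tracker) throws IOException {
        HttpServer server = HttpServer.create(new InetSocketAddress(port), 0);
        // A thread pool lets /ping answer while an invocation is still running.
        server.setExecutor(Executors.newCachedThreadPool());

        // Assumed response shape: a JSON object with a single "status" field.
        server.createContext("/ping", ex ->
                respond(ex, 200, "{\"status\":\"" + tracker.status() + "\"}"));

        server.createContext("/invocations", ex -> {
            tracker.taskStarted();
            try {
                // Placeholder for agent logic: report how many bytes were received.
                byte[] in = ex.getRequestBody().readAllBytes();
                respond(ex, 200, "{\"receivedBytes\":" + in.length + "}");
            } finally {
                tracker.taskFinished();
            }
        });
        server.start();
        return server;
    }

    public static void main(String[] args) throws IOException {
        start(8080, new BusyTracker());
    }
}
```

The point of the sketch is the coupling between the two endpoints: whatever handles /invocations has to feed the status that /ping reports, which is the bookkeeping the SDK takes over.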

Beyond the contract, the SDK provides additional capabilities for production workloads such as handling SSE responses with proper framing, backpressure handling, and connection lifecycle management for large responses. It also provides rate limiting, throttling requests to protect your agent from traffic spikes and limit per-user consumption. You focus on agent logic while the SDK handles the runtime integration.
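As an illustration of per-user rate limiting, the following sketch uses a token bucket, a common technique for this kind of throttling. The post does not say which algorithm the SDK uses, so treat the algorithm choice, the class names (TokenBucket, PerUserRateLimiter), and the parameters as assumptions. Time is passed in explicitly to keep the behavior easy to reason about.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

/** Token bucket: holds up to `capacity` tokens and refills at a fixed rate. */
class TokenBucket {
    private final double capacity;
    private final double refillPerSecond;
    private double tokens;
    private long lastRefillNanos;

    TokenBucket(double capacity, double refillPerSecond, long nowNanos) {
        this.capacity = capacity;
        this.refillPerSecond = refillPerSecond;
        this.tokens = capacity;          // start full so short bursts are allowed
        this.lastRefillNanos = nowNanos;
    }

    /** Takes one token if available; returns false when the caller should be throttled. */
    synchronized boolean tryAcquire(long nowNanos) {
        double elapsedSeconds = (nowNanos - lastRefillNanos) / 1_000_000_000.0;
        tokens = Math.min(capacity, tokens + elapsedSeconds * refillPerSecond);
        lastRefillNanos = nowNanos;
        if (tokens >= 1.0) {
            tokens -= 1.0;
            return true;
        }
        return false;
    }
}

/** Keeps one bucket per user so a single user cannot consume the whole budget. */
class PerUserRateLimiter {
    private final Map<String, TokenBucket> buckets = new ConcurrentHashMap<>();
    private final double capacity;
    private final double refillPerSecond;

    PerUserRateLimiter(double capacity, double refillPerSecond) {
        this.capacity = capacity;
        this.refillPerSecond = refillPerSecond;
    }

    boolean allow(String userId, long nowNanos) {
        return buckets
                .computeIfAbsent(userId, id -> new TokenBucket(capacity, refillPerSecond, nowNanos))
                .tryAcquire(nowNanos);
    }
}
```

In a real service you would pass `System.nanoTime()` as the clock value and reject throttled requests with an HTTP 429 response.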

In this post, we build a production-ready AI agent starting with a chat endpoint, then adding streaming responses, conversation memory, and tools for web browsing and code execution. By the end, you will have a fully functional agent ready to deploy to AgentCore Runtime or run standalone on your existing infrastructure.

Prerequisites

To follow along, you need:

- Java 17 or higher (Java 25 recommended)

- Spring Boot 3.5 or higher

- An AWS account

- Maven or Gradle

Solution overview

The Spring AI AgentCore SDK is built on three design principles:

- Convention over configuration – Sensible defaults align with AgentCore expectations (port 8080, endpoint paths, content-type handling) without explicit configuration.

- Annotation-driven development – A single @AgentCoreInvocation annotation transforms any Spring bean method into an AgentCore-compatible endpoint with automatic serialization, streaming detection, and response formatting.

- Deployment flexibility – The SDK supports AgentCore Runtime for fully managed deployment, but you can also use individual modules (Memory, Browser, Code Interpreter) in applications running on Amazon EKS, Amazon ECS, or any other infrastructure.
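To give a feel for what annotation-driven development means mechanically, here is a small JDK-only sketch of how a framework could locate an annotated method and decide whether it streams. It is not how the SDK is implemented. The @Invocation annotation, InvocationDispatcher, and EchoAgent are hypothetical stand-ins, and using Flow.Publisher as the streaming marker is an assumption chosen to avoid external dependencies.

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;
import java.lang.reflect.Method;
import java.util.concurrent.Flow;

/** Hypothetical marker annotation, standing in for @AgentCoreInvocation. */
@Retention(RetentionPolicy.RUNTIME)
@Target(ElementType.METHOD)
@interface Invocation {}

/** Finds the single annotated method on a bean and routes requests to it. */
class InvocationDispatcher {
    private final Object bean;
    private final Method handler;

    InvocationDispatcher(Object bean) {
        this.bean = bean;
        Method found = null;
        for (Method m : bean.getClass().getDeclaredMethods()) {
            if (m.isAnnotationPresent(Invocation.class)) {
                if (found != null) {
                    throw new IllegalStateException("More than one @Invocation method");
                }
                found = m;
            }
        }
        if (found == null) {
            throw new IllegalStateException("No @Invocation method on " + bean.getClass().getName());
        }
        found.setAccessible(true);
        this.handler = found;
    }

    /** Streaming detection: a reactive return type means the response should be streamed. */
    boolean isStreaming() {
        return Flow.Publisher.class.isAssignableFrom(handler.getReturnType());
    }

    Object invoke(Object request) {
        try {
            return handler.invoke(bean, request);
        } catch (ReflectiveOperationException e) {
            throw new IllegalStateException("Invocation failed", e);
        }
    }
}

/** Example bean: the agent author writes only this method. */
class EchoAgent {
    @Invocation
    String chat(String prompt) {
        return "echo: " + prompt;
    }
}
```

The agent class contains no HTTP or serialization code. Everything else is derived from the annotation and the method signature, which is the idea behind the second principle above.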

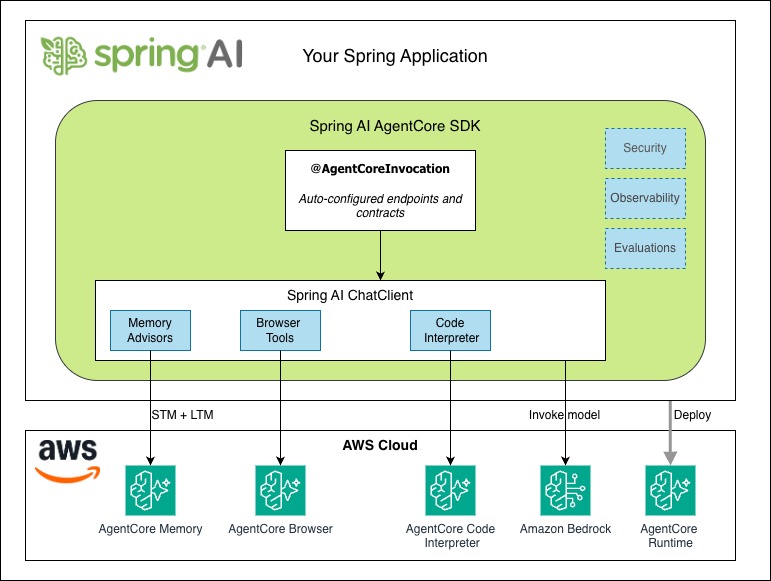

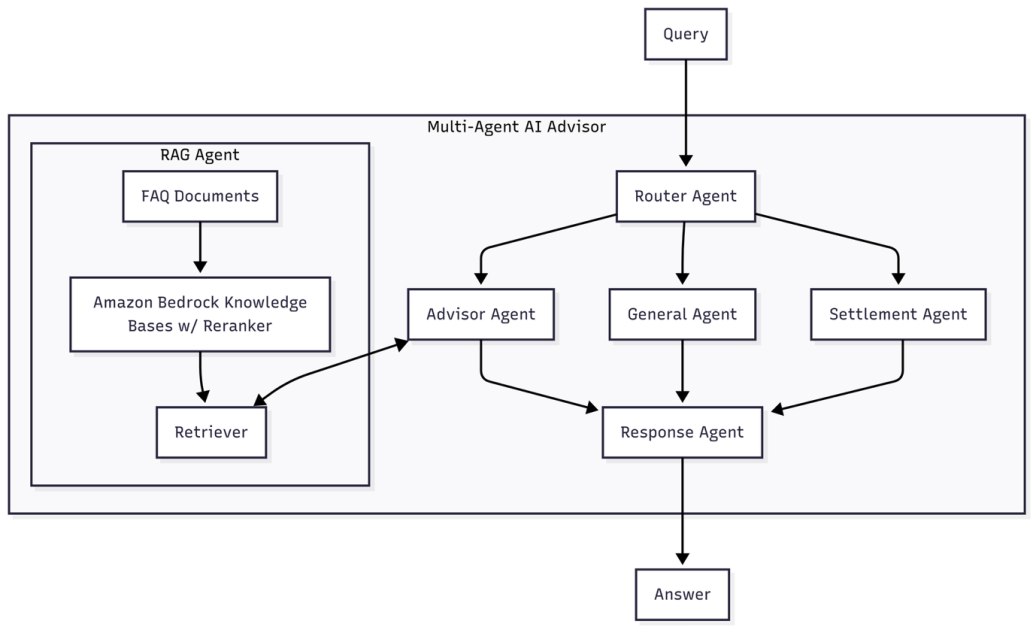

The following diagram shows how the SDK components interact. The @AgentCoreInvocation annotation handles the runtime contract, while the ChatClient composes Memory advisors, Browser tools, and Code Interpreter. Deployment to AgentCore Runtime is optional. You can use the SDK modules as standalone features.

Creating your first AI agent

The following section walks you through how to create a fully functional agent step by step:

Step 1: Add the SDK dependency

Add the Spring AI AgentCore BOM to your Maven project, then include the runtime starter:

Step 2: Create the agent

The @AgentCoreInvocation annotation tells the SDK that this method handles incoming agent requests. The SDK auto-configures POST /invocations and GET /ping endpoints, handles JSON serialization, and reports health status automatically.

Step 3: Configure Amazon Bedrock

Set your model and AWS Region in application.properties:

    spring.ai.bedrock.aws.region=us-east-1
    spring.ai.bedrock.converse.chat.options.model=global.anthropic.claude-sonnet-4-5-20250929-v1:0

Step 4: Test locally

Start the application and send a request:
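If you prefer to send the test request from Java, the following sketch uses the JDK's HttpClient. The request body shape, a JSON object with a single prompt field, is an assumption, so adjust it to match the request type your annotated method accepts. LocalInvoke and its methods are illustrative names, and the port is the SDK default of 8080 mentioned earlier.

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

class LocalInvoke {
    /** Minimal JSON string escaping for backslashes, quotes, and newlines. */
    static String jsonEscape(String s) {
        return s.replace("\\", "\\\\").replace("\"", "\\\"").replace("\n", "\\n");
    }

    /** Builds a POST to /invocations; the {"prompt": ...} body is an assumed shape. */
    static HttpRequest invocation(String baseUrl, String prompt) {
        String body = "{\"prompt\":\"" + jsonEscape(prompt) + "\"}";
        return HttpRequest.newBuilder(URI.create(baseUrl + "/invocations"))
                .header("Content-Type", "application/json")
                .POST(HttpRequest.BodyPublishers.ofString(body))
                .build();
    }

    public static void main(String[] args) throws Exception {
        HttpRequest request = invocation("http://localhost:8080", "Hello, agent!");
        HttpResponse<String> response = HttpClient.newHttpClient()
                .send(request, HttpResponse.BodyHandlers.ofString());
        System.out.println(response.statusCode() + " " + response.body());
    }
}
```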

That’s a complete, AgentCore-compatible AI agent. No custom controllers, no protocol handling, no health check implementation.

Step 5: Add streaming

To stream responses as they’re generated, change the return type to Flux<String>. The SDK handles SSE framing, Content-Type headers, newline preservation, and connection lifecycle. Your code stays focused on the AI interaction.
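Newline preservation matters because SSE is line-oriented: a chunk that contains a newline has to be split across several data: lines, or the client will see a truncated event. The following JDK-only sketch shows the framing rule from the Server-Sent Events format. It is not the SDK's code, SseFraming is a hypothetical name, and it assumes chunks use \n as the line separator.

```java
import java.util.stream.Collectors;

class SseFraming {
    /** Frames one chunk as an SSE event: one "data:" line per payload line, then a blank line. */
    static String frame(String chunk) {
        StringBuilder event = new StringBuilder();
        // A limit of -1 keeps trailing empty strings, so trailing newlines survive.
        for (String line : chunk.split("\n", -1)) {
            event.append("data: ").append(line).append('\n');
        }
        return event.append('\n').toString();
    }

    /** Reverses frame(): joins the data lines of a single event with newlines. */
    static String parse(String event) {
        return event.lines()
                .filter(l -> l.startsWith("data:"))
                // The format allows one optional space after the colon.
                .map(l -> l.startsWith("data: ") ? l.substring(6) : l.substring(5))
                .collect(Collectors.joining("\n"));
    }
}
```

For example, `SseFraming.frame("a\nb")` produces two data: lines followed by a blank line, and `parse` on the client side restores the original text, including the newline between them.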

Real-world agents must remember what users said earlier in a conversation (short-term memory) and what they’ve learned over time (long-term memory). The SDK integrates with AgentCore Memory through Spring AI’s advisor pattern, interceptors that enrich prompts with context before they reach the model.

Short-term memory (STM) keeps recent messages using a sliding window. Long-term memory (LTM) persists knowledge across sessions using four strategies:

AgentCore consolidates these strategies asynchronously, extracting relevant information without explicit developer intervention.Add the memory dependency and enable auto-discovery. In auto-discovery mode, the SDK automatically detects available long-term memory strategies and namespaces without manual configuration:

Then inject AgentCoreMemory and compose it into your chat client:

The agentCoreMemory.advisors list includes both STM and all configured LTM advisors. For detailed configuration options, see the Memory documentation.

AgentCore provides specialized tools that the SDK exposes as Spring AI tool callbacks through the ToolCallbackProvider interface.

Browser automation – Agents can navigate websites, extract content, take screenshots, and interact with page elements using AgentCore Browser:

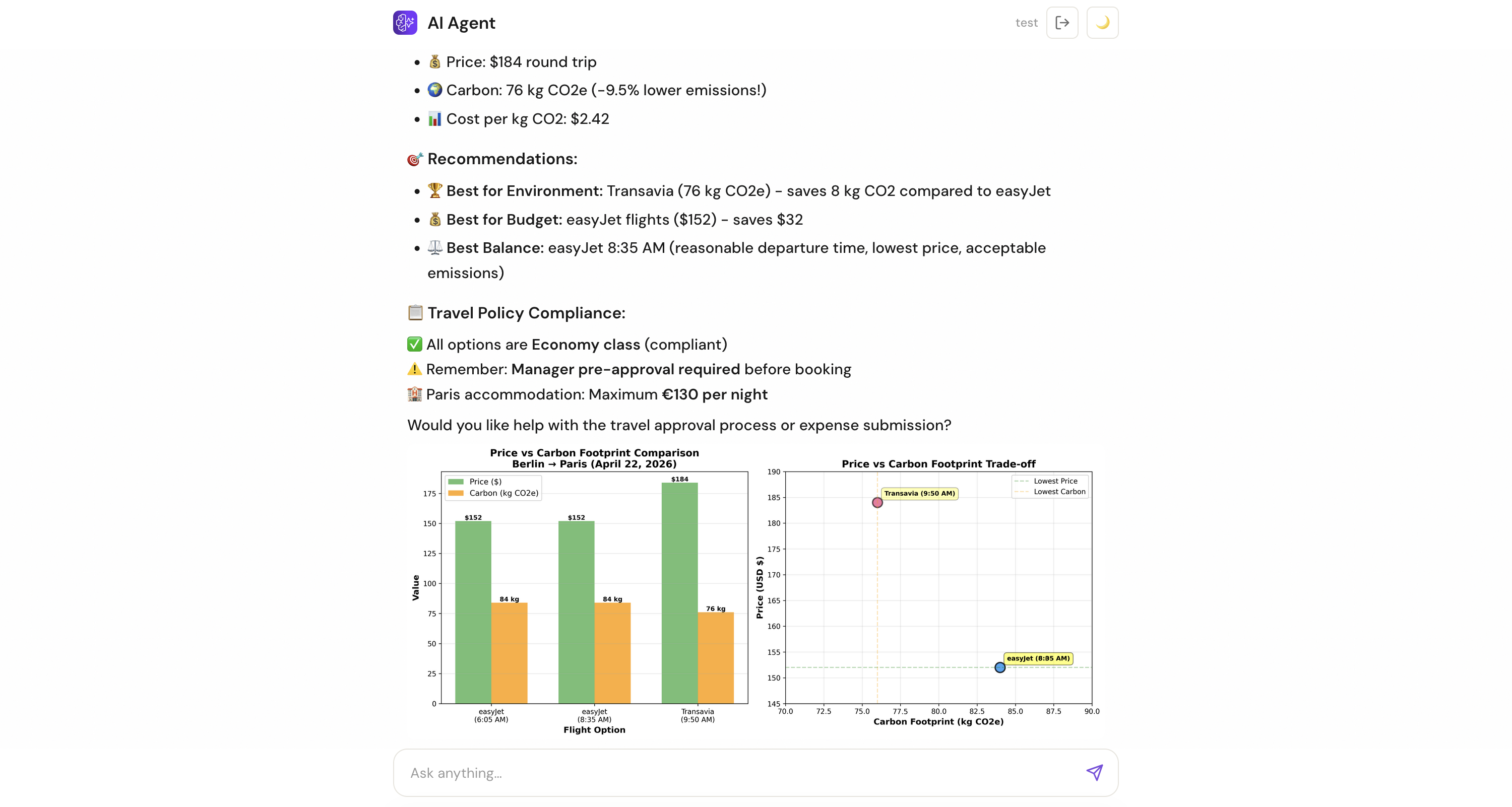

Code interpreter – Agents can write and run Python, JavaScript, or TypeScript in a secure sandbox using AgentCore Code Interpreter. The sandbox includes numpy, pandas, and matplotlib. Generated files are captured through the artifact store.

Both tools integrate through Spring AI’s The model sees all tools equally and decides which to call based on the user’s request. While this post focuses on Amazon Bedrock to access foundation models (FMs), Spring AI supports multiple large language model (LLM) providers including OpenAI and Anthropic, so you can choose the models that fit your needs. For example, a travel and expense management agent can use the browser tool to look up flight options and the code interpreter to analyze spending patterns and generate charts, all within a single conversation:

The SDK supports two deployment models:

AgentCore Runtime – For fully managed infrastructure, package your application as an ARM64 container, push it to Amazon Elastic Container Registry (Amazon ECR), and create an AgentCore Runtime that references the image. The runtime handles scaling and health monitoring. The examples/terraform directory provides infrastructure as code (IaC) with IAM and OAuth authentication options.

Standalone – Use AgentCore Memory, Browser, or Code Interpreter in applications running on Amazon Elastic Kubernetes Service (Amazon EKS), Amazon Elastic Container Service (Amazon ECS), Amazon Elastic Compute Cloud (Amazon EC2), or on-premises. With this approach, teams can adopt AgentCore capabilities incrementally. For example, adding memory to an existing Spring Boot service before migrating to AgentCore Runtime later.

AgentCore Runtime supports two authentication methods: IAM-based SigV4 for AWS service-to-service calls and OAuth2 for user-facing applications. When your Spring AI agent is deployed to AgentCore Runtime, authentication is handled at the infrastructure layer. Your application receives the authenticated user’s identity through Spring AI agents can access organizational tools through AgentCore Gateway, which provides Model Context Protocol (MCP) support with outbound authentication and a semantic tool registry. To use Gateway, configure your Spring AI MCP client endpoint to point to AgentCore Gateway and authenticate using either IAM SigV4 or OAuth2:

This enables agents to discover and invoke enterprise tools while Gateway handles credential management for downstream services. For a hands-on example, see the Building Java AI agents with Spring AI and Amazon Bedrock AgentCore workshop, which demonstrates MCP integration with AgentCore Gateway.

The SDK continues to evolve. Upcoming integrations will include:

If you created resources while following this post, delete them to avoid ongoing charges:

In this post, we showed you how to build production-ready AI agents in Java using the Spring AI AgentCore SDK. Starting from an annotated method, we added streaming responses, persistent memory, browser automation, and code execution—all through known Spring patterns.The SDK is an open source under the Apache 2.0 license. To get started:

We welcome your feedback and contributions. Leave a comment to share your experience or open an issue on the GitHub repository.

Step 6: Add memory to your agent

Strategy

Purpose

Example

Semantic

Factual information about users

“User works in finance”

User preference

Explicit settings and choices

“Metric units preferred”

Summary

Condensed conversation history

Session summaries for continuity

Episodic

Past interactions and lessons

“User had trouble with X last week”

Step 7: Extending agents with tools

ToolCallbackProvider interface. Here is the final MyAgent with memory, browser, and code interpreter composed together:

Deploying your agent

Authentication and authorization

AgentCoreContext. Fine-grained authorization can then be implemented in your Spring application using standard Spring Security patterns with these principles. For standalone deployments, your Spring application is responsible for providing authentication and authorization using Spring Security. In this case, calls to AgentCore services (Memory, Browser, Code Interpreter) are secured using standard AWS SDK credential mechanisms.

Connecting to MCP tools with AgentCore Gateway

What’s next?

Cleaning up

Conclusion

simple-spring-boot-app — Minimal agent with basic request handlingspring-ai-sse-chat-client — Streaming responses with Server-Sent Eventsspring-ai-memory-integration — Short-term and long-term memory usagespring-ai-extended-chat-client — OAuth authentication with per-user memory isolationspring-ai-browser — Web browsing and screenshot capabilities

