Automate Amazon Bedrock batch inference: Building a scalable and efficient pipeline

Amazon Bedrock is a fully managed service that offers a choice of high-performing foundation models (FMs) from leading AI companies such as AI21 Labs, Anthropic, Cohere, Meta, Mistral AI, Stability AI, and Amazon through a single API, along with a broad set of capabilities you need to build generative AI applications with security, privacy, and responsible AI.

Batch inference in Amazon Bedrock efficiently processes large volumes of data using FMs when real-time results aren’t necessary. It’s ideal for workloads that aren’t latency sensitive, such as obtaining embeddings, entity extraction, FM-as-judge evaluations, and text categorization and summarization for business reporting tasks. A key advantage is its cost-effectiveness: batch inference workloads are charged at a 50% discount compared to On-Demand pricing. Refer to Supported Regions and models for batch inference for the currently supported AWS Regions and models.

Although batch inference offers numerous benefits, it’s limited to 10 batch inference jobs submitted per model per Region. To address this consideration and enhance your use of batch inference, we’ve developed a scalable solution using AWS Lambda and Amazon DynamoDB. This post guides you through implementing a queue management system that automatically monitors available job slots and submits new jobs as slots become available.

We walk you through our solution, detailing the core logic of the Lambda functions. By the end, you’ll understand how to implement this solution so you can maximize the efficiency of your batch inference workflows on Amazon Bedrock. For instructions on how to start your Amazon Bedrock batch inference job, refer to Enhance call center efficiency using batch inference for transcript summarization with Amazon Bedrock.

The power of batch inference

Organizations can use batch inference to process large volumes of data asynchronously, making it ideal for scenarios where real-time results are not critical. This capability is particularly useful for tasks such as asynchronous embedding generation, large-scale text classification, and bulk content analysis. For instance, businesses can use batch inference to generate embeddings for vast document collections, classify extensive datasets, or analyze substantial amounts of user-generated content efficiently.

One of the key advantages of batch inference is its cost-effectiveness. Amazon Bedrock offers select FMs for batch inference at 50% of the On-Demand inference price. Organizations can process large datasets more economically because of this significant cost reduction, making it an attractive option for businesses looking to optimize their generative AI processing expenses while maintaining the ability to handle substantial data volumes.
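As a rough illustration of the pricing claim, the arithmetic is a single multiplication. The dollar figure below is a hypothetical placeholder, not a published price; only the 50% discount comes from this post.

```python
BATCH_DISCOUNT = 0.50  # batch inference is billed at 50% of On-Demand, per this post


def batch_cost(on_demand_cost: float, discount: float = BATCH_DISCOUNT) -> float:
    """Estimate the batch cost of a workload from its On-Demand cost."""
    return on_demand_cost * (1.0 - discount)


# Hypothetical workload that would cost $1,000 at On-Demand rates.
print(batch_cost(1000.0))  # 500.0
```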

Solution overview

The solution presented in this post uses batch inference in Amazon Bedrock to process many requests efficiently using the following solution architecture.

This architecture workflow includes the following steps:

- A user uploads files to be processed to an Amazon Simple Storage Service (Amazon S3) bucket br-batch-inference-{Account_Id}-{AWS-Region} in the to-process folder. Amazon S3 invokes the {stack_name}-create-batch-queue-{AWS-Region} Lambda function.

- The invoked Lambda function creates new job entries in a DynamoDB table with the status as Pending. The DynamoDB table is crucial for tracking and managing the batch inference jobs throughout their lifecycle. It stores information such as job ID, status, creation time, and other metadata.

- The Amazon EventBridge rule scheduled to run every 15 minutes invokes the {stack_name}-process-batch-jobs-{AWS-Region} Lambda function.

- The {stack_name}-process-batch-jobs-{AWS-Region} Lambda function performs several key tasks:

  - Scans the DynamoDB table for jobs in InProgress, Submitted, Validation, and Scheduled status

  - Updates job status in DynamoDB based on the latest information from Amazon Bedrock

  - Calculates available job slots and submits new jobs from the Pending queue if slots are available

  - Handles error scenarios by updating job status to Failed and logging error details for troubleshooting

- The Lambda function makes the GetModelInvocationJob API call to get the latest status of the batch inference jobs from Amazon Bedrock

- The Lambda function then updates the status of the jobs in DynamoDB using the UpdateItem API call, making sure that the table always reflects the most current state of each job

- The Lambda function calculates the number of available slots before the Service Quota Limit for batch inference jobs is reached. Based on this, it queries for jobs in the Pending state that can be submitted

- If there is a slot available, the Lambda function will make CreateModelInvocationJob API calls to create new batch inference jobs for the pending jobs

- It updates the DynamoDB table with the status of the batch inference jobs created in the previous step

- After one batch job is complete, its output files will be available in the S3 bucket br-batch-inference-{Account_Id}-{AWS-Region} processed folder
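The scheduled pass described in the steps above can be sketched roughly as follows. This is not the deployed Lambda code: an in-memory list of dictionaries stands in for the DynamoDB table, the field names (`job_arn`, `job_name`, `created_at`, `input_s3_uri`) are hypothetical, and `bedrock` is assumed to behave like a boto3 `bedrock` client, which reports the validation state as `Validating`. The limit of 10 is the quota quoted in this post; your account's value may differ.

```python
from typing import Any

MAX_CONCURRENT_JOBS = 10  # quota quoted in this post; verify in Service Quotas
ACTIVE_STATUSES = {"Submitted", "Validating", "Scheduled", "InProgress"}


def process_batch_jobs(bedrock: Any, jobs: list[dict], role_arn: str,
                       model_id: str, output_s3_uri: str) -> list[dict]:
    """Run one pass: refresh statuses, count free slots, submit pending jobs."""
    # 1. Refresh every job that Amazon Bedrock is still working on.
    #    (In the real pipeline this would be a DynamoDB scan plus UpdateItem.)
    for job in jobs:
        if job["status"] in ACTIVE_STATUSES:
            try:
                resp = bedrock.get_model_invocation_job(jobIdentifier=job["job_arn"])
                job["status"] = resp["status"]
            except Exception as exc:  # record the error for troubleshooting
                job["status"] = "Failed"
                job["error"] = str(exc)

    # 2. Work out how many slots remain under the quota.
    active = sum(1 for job in jobs if job["status"] in ACTIVE_STATUSES)
    slots = max(0, MAX_CONCURRENT_JOBS - active)

    # 3. Submit the oldest pending jobs, one per free slot.
    pending = sorted((j for j in jobs if j["status"] == "Pending"),
                     key=lambda j: j["created_at"])
    for job in pending[:slots]:
        try:
            resp = bedrock.create_model_invocation_job(
                jobName=job["job_name"],
                roleArn=role_arn,
                modelId=model_id,
                inputDataConfig={"s3InputDataConfig": {"s3Uri": job["input_s3_uri"]}},
                outputDataConfig={"s3OutputDataConfig": {"s3Uri": output_s3_uri}},
            )
            job["job_arn"] = resp["jobArn"]
            job["status"] = "Submitted"
        except Exception as exc:
            job["status"] = "Failed"
            job["error"] = str(exc)
    return jobs
```

Passing the client in as an argument keeps the slot logic testable without AWS credentials; in a Lambda handler you would pass `boto3.client("bedrock")`.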

Prerequisites

To implement the solution, you need the following prerequisites:

- An active AWS account.

- An AWS Region from the list of batch inference supported Regions for Amazon Bedrock.

- Access to your selected models hosted on Amazon Bedrock. Make sure the selected model has been enabled in Amazon Bedrock.

- If you plan to use your own AWS Identity and Access Management (IAM) role for batch inference, create it with a trust policy and Amazon S3 access (read access to the folder containing input data and write access to the folder storing output data).
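For the last prerequisite, a custom role needs two policy documents. The sketch below builds them as Python dictionaries; the account ID, Region, and folder names are placeholder assumptions, and the exact S3 actions your job needs may differ, so treat this as a starting point to compare against the Amazon Bedrock documentation rather than a definitive policy.

```python
import json


def batch_inference_trust_policy(account_id: str, region: str) -> dict:
    """Trust policy that lets Amazon Bedrock assume the role for batch jobs."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Effect": "Allow",
            "Principal": {"Service": "bedrock.amazonaws.com"},
            "Action": "sts:AssumeRole",
            # Restrict the role to batch jobs from this account and Region.
            "Condition": {
                "StringEquals": {"aws:SourceAccount": account_id},
                "ArnEquals": {
                    "aws:SourceArn":
                        f"arn:aws:bedrock:{region}:{account_id}:model-invocation-job/*"
                },
            },
        }],
    }


def s3_access_policy(bucket: str, input_prefix: str = "to-process",
                     output_prefix: str = "processed") -> dict:
    """Read access to the input folder, write access to the output folder."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {"Effect": "Allow", "Action": ["s3:ListBucket"],
             "Resource": [f"arn:aws:s3:::{bucket}"]},
            {"Effect": "Allow", "Action": ["s3:GetObject"],
             "Resource": [f"arn:aws:s3:::{bucket}/{input_prefix}/*"]},
            {"Effect": "Allow", "Action": ["s3:PutObject"],
             "Resource": [f"arn:aws:s3:::{bucket}/{output_prefix}/*"]},
        ],
    }


# Placeholder account ID and Region, for illustration only.
print(json.dumps(batch_inference_trust_policy("111122223333", "us-east-1"), indent=2))
```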

Deployment guide

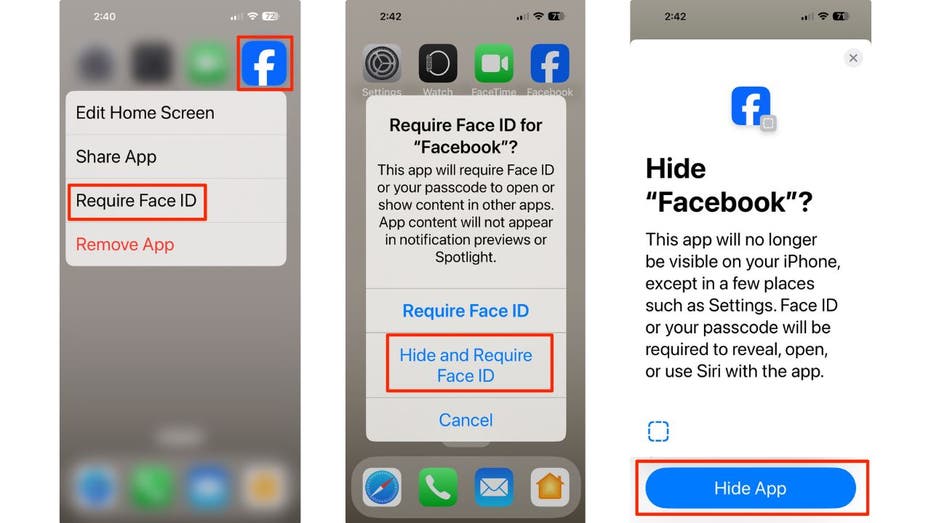

To deploy the pipeline, complete the following steps:

- Choose the Launch Stack button:

- Choose Next, as shown in the following screenshot

- Specify the pipeline details with the options fitting your use case:

  - Stack name (Required) – The name you specify for this AWS CloudFormation stack. The name must be unique in the Region in which you’re creating it.

  - ModelId (Required) – The ID of the model that you want your batch jobs to run with.

  - RoleArn (Optional) – By default, the CloudFormation stack will deploy a new IAM role with the required permissions. If you have a role you want to use instead of creating a new role, provide the IAM role Amazon Resource Name (ARN) that has sufficient permission to create a batch inference job in Amazon Bedrock and read/write in the created S3 bucket br-batch-inference-{Account_Id}-{AWS-Region}. Follow the instructions in the prerequisites section to create this role.

- In the Configure stack options section, add optional tags, permissions, and other advanced settings if needed. Alternatively, leave the section blank and choose Next, as shown in the following screenshot.

- Review the stack details and select I acknowledge that AWS CloudFormation might create AWS IAM resources, as shown in the following screenshot.

- Choose Submit. This initiates the pipeline deployment in your AWS account.

- After the stack is deployed successfully, you can start using the pipeline. First, create a /to-process folder under the created Amazon S3 location for input. Each .jsonl file uploaded to this folder results in a batch job created with the selected model. The following is a screenshot of the DynamoDB table where you can track the job status and other types of metadata related to the job.

- After your first batch job from the pipeline is complete, the pipeline will create a /processed folder under the same bucket, as shown in the following screenshot. Outputs from the batch jobs created by this pipeline will be stored in this folder.

- To start using this pipeline, upload the .jsonl files you’ve prepared for batch inference in Amazon Bedrock

You’re done! You’ve successfully deployed your pipeline and you can check the batch job status in the Amazon Bedrock console. If you want to have more insights about each .jsonl file’s status, navigate to the created DynamoDB table {StackName}-DynamoDBTable-{UniqueString} and check the status there. You may need to wait up to 15 minutes to observe the batch jobs created because EventBridge is scheduled to scan DynamoDB every 15 minutes.
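If you have not yet prepared an input file, the following sketch shows one way to build it. Each line of a batch inference .jsonl file is a JSON object with a `recordId` and a `modelInput`, where `modelInput` follows the request format of the model you selected. The body used here assumes an Anthropic Claude messages-style model; the prompts and the `REC` ID scheme are illustrative only.

```python
import json


def build_record(record_id: str, prompt: str, max_tokens: int = 512) -> dict:
    """Build one batch record; modelInput assumes a Claude messages-style model."""
    return {
        "recordId": record_id,
        "modelInput": {
            "anthropic_version": "bedrock-2023-05-31",
            "max_tokens": max_tokens,
            "messages": [
                {"role": "user", "content": [{"type": "text", "text": prompt}]}
            ],
        },
    }


def to_jsonl(prompts: list[str]) -> str:
    """Serialize prompts as JSON Lines, one record per line."""
    lines = [
        json.dumps(build_record(f"REC{i:08d}", prompt))  # 11-character record ID
        for i, prompt in enumerate(prompts, start=1)
    ]
    return "\n".join(lines) + "\n"


# Illustrative prompts; write the result to a file and upload it to /to-process.
with open("batch-input.jsonl", "w", encoding="utf-8") as handle:
    handle.write(to_jsonl(["Summarize: ...", "Classify the sentiment: ..."]))
```

The resulting file can then be uploaded to the to-process folder through the Amazon S3 console or with the S3 client's `upload_file` method.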

Clean up

If you no longer need this automated pipeline, follow these steps to delete the resources it created to avoid additional cost:

- On the Amazon S3 console, manually delete the contents of the bucket created by the stack. Make sure the bucket is empty before moving to step 2.

- On the AWS CloudFormation console, choose Stacks in the navigation pane.

- Select the created stack and choose Delete, as shown in the following screenshot.

This automatically deletes the deployed stack.
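The first clean-up step can also be scripted instead of done on the console. The sketch below is one possible approach, assuming `s3` behaves like a boto3 S3 client and that the bucket is not versioned (a versioned bucket would also need its object versions removed).

```python
from typing import Any


def empty_bucket(s3: Any, bucket: str) -> int:
    """Delete every object in the bucket and return how many were removed."""
    deleted = 0
    kwargs = {"Bucket": bucket}
    while True:
        # Each page holds at most 1,000 keys, matching the delete_objects limit.
        page = s3.list_objects_v2(**kwargs)
        keys = [{"Key": obj["Key"]} for obj in page.get("Contents", [])]
        if keys:
            s3.delete_objects(Bucket=bucket, Delete={"Objects": keys})
            deleted += len(keys)
        if not page.get("IsTruncated"):
            break
        kwargs["ContinuationToken"] = page["NextContinuationToken"]
    return deleted


# Example usage (requires boto3 and AWS credentials):
#   import boto3
#   empty_bucket(boto3.client("s3"), "br-batch-inference-{Account_Id}-{AWS-Region}")
```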

Conclusion

In this post, we’ve introduced a scalable and efficient solution for automating batch inference jobs in Amazon Bedrock. By using AWS Lambda, Amazon DynamoDB, and Amazon EventBridge, we’ve addressed key challenges in managing large-scale batch processing workflows.

This solution offers several significant benefits:

- Automated queue management – Maximizes throughput by dynamically managing job slots and submissions

- Cost optimization – Uses the 50% discount on batch inference pricing for economical large-scale processing

This automated pipeline significantly enhances your ability to process large amounts of data using batch inference for Amazon Bedrock. Whether you’re generating embeddings, classifying text, or analyzing content in bulk, this solution offers a scalable, efficient, and cost-effective approach to batch inference.

As you implement this solution, remember to regularly review and optimize your configuration based on your specific workload patterns and requirements. With this automated pipeline and the power of Amazon Bedrock, you’re well-equipped to tackle large-scale AI inference tasks efficiently and effectively. We encourage you to try it out and share your feedback to help us continually improve this solution.

For additional resources, refer to the following:

- User guide – Process multiple prompts with batch inference

- Code sample – Sample for building your batch inference job

- Blog post – Enhance call center efficiency using batch inference for transcript summarization with Amazon Bedrock

About the authors

Yanyan Zhang is a Senior Generative AI Data Scientist at Amazon Web Services, where she has been working on cutting-edge AI/ML technologies as a Generative AI Specialist, helping customers use generative AI to achieve their desired outcomes. Yanyan graduated from Texas A&M University with a PhD in Electrical Engineering. Outside of work, she loves traveling, working out, and exploring new things.

Ishan Singh is a Generative AI Data Scientist at Amazon Web Services, where he helps customers build innovative and responsible generative AI solutions and products. With a strong background in AI/ML, Ishan specializes in building Generative AI solutions that drive business value. Outside of work, he enjoys playing volleyball, exploring local bike trails, and spending time with his wife and dog, Beau.

Neeraj Lamba is a Cloud Infrastructure Architect with Amazon Web Services (AWS) Worldwide Public Sector Professional Services. He helps customers transform their business by helping design their cloud solutions and offering technical guidance. Outside of work, he likes to travel, play tennis, and experiment with new technologies.
